When OpenAI released ChatGPT, it became obvious – and very fast – that we were entering a new era of AI. Every tech company scrambled to release a comparable service or to infuse their products with some form of GenAI. Microsoft, piggybacking on its investment in OpenAI, was the fastest to market with impressive text and image generation for the mainstream. Copilot is now embedded across its software, including Microsoft 365, Teams, GitHub, and Dynamics, to supercharge the productivity of developers and knowledge workers. However, the race is on – AWS and Google are actively developing their own GenAI capabilities.

AWS Catches Up as Enterprise Gains Importance

Without a consumer-facing AI assistant, AWS was less visible during the early stages of the GenAI boom. They have since rectified this with a USD 4B investment into Anthropic, the makers of Claude. This partnership will benefit both Amazon and Anthropic, bringing the Claude 3 family of models to enterprise customers, hosted on AWS infrastructure.

As GenAI quickly emerges from shadow IT to an enterprise-grade tool, AWS is catching up by capitalising on their position as cloud leader. Many organisations view AWS as a strategic partner, already housing their data, powering critical applications, and providing an environment that developers are accustomed to. The ability to augment models with private data already residing in AWS data repositories will make it an attractive GenAI partner.

AWS has announced the general availability of Amazon Q, their suite of GenAI tools aimed at developers and businesses. Amazon Q Developer expands on what was launched as CodeWhisperer last year. It helps developers accelerate the process of building, testing, and troubleshooting code, allowing them to focus on higher-value work. The tool, which can directly integrate with a developer’s chosen IDE, uses NLP to develop new functions, modernise legacy code, write security tests, and explain code.

Amazon Q Business is an AI assistant that can safely ingest an organisation’s internal data and connect with popular applications, such as Amazon S3, Salesforce, Microsoft Exchange, Slack, ServiceNow, and Jira. Access controls can be implemented to ensure data is only shared with authorised users. It leverages AWS’s visualisation tool, QuickSight, to summarise findings. It also integrates directly with applications like Slack, allowing users to query it directly.

Going a step further, Amazon Q Apps (in preview) allows employees to build their own lightweight GenAI apps using natural language. These employee-created apps can then be published to an enterprise’s app library for broader use. This no-code approach to development and deployment is part of a drive to use AI to increase productivity across lines of business.

AWS continues to expand on Bedrock, their managed service providing access to foundational models from companies like Mistral AI, Stability AI, Meta, and Anthropic. The service also allows customers to bring their own model in cases where they have already pre-trained their own LLM. Once a model is selected, organisations can extend its knowledge base using Retrieval-Augmented Generation (RAG) to privately access proprietary data. Models can also be refined over time to improve results and offer personalised experiences for users. Another feature, Agents for Amazon Bedrock, allows multi-step tasks to be performed by invoking APIs or searching knowledge bases.
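The retrieval step behind RAG can be illustrated with a minimal sketch. This is not the Bedrock API: the in-memory `DOCUMENTS` list, the word-count similarity, and the function names `retrieve` and `build_prompt` are all assumptions made for illustration. A production system would typically use an embedding model and a vector store rather than word overlap.

```python
import math
import re
from collections import Counter

# Hypothetical private "knowledge base"; stands in for proprietary enterprise data.
DOCUMENTS = [
    "Refund requests are processed within 14 days of approval.",
    "Employees accrue 20 days of annual leave per year.",
    "The VPN must be used when accessing internal systems remotely.",
]


def tokenize(text):
    """Lowercase the text and split it into alphanumeric word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine_similarity(a, b):
    """Cosine similarity between two word-count vectors (Counter objects)."""
    dot = sum(a[token] * b[token] for token in set(a) & set(b))
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(question, documents, top_k=1):
    """Return the top_k documents most similar to the question."""
    query = Counter(tokenize(question))
    ranked = sorted(
        documents,
        key=lambda doc: cosine_similarity(query, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, documents, top_k=1):
    """Augment the question with retrieved context before it is sent to a model."""
    context = "\n".join(retrieve(question, documents, top_k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


print(build_prompt("How many days of annual leave do employees get?", DOCUMENTS))
```

The point of the sketch is that the model itself is unchanged: the private data reaches it only as context attached to each prompt.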

To address AI safety concerns, Guardrails for Amazon Bedrock is now available to minimise harmful content generation and avoid negative outcomes for users and brands. Contentious topics can be filtered by varying thresholds, and Personally Identifiable Information (PII) can be masked. Enterprise-wide policies can be defined centrally and enforced across multiple Bedrock models.
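The two ideas in this paragraph, threshold-based topic filtering and PII masking, can be sketched in a few lines. This is an illustrative assumption, not how Guardrails for Amazon Bedrock is implemented or configured: the regular expressions are deliberately simple, the `POLICY` thresholds are invented, and the topic scores are assumed to come from some upstream classifier.

```python
import re

# Deliberately simple patterns for illustration; real PII detection is far broader.
EMAIL_PATTERN = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
PHONE_PATTERN = re.compile(r"\+?\d[\d\s-]{7,}\d")

# Hypothetical central policy: block when a topic score meets or exceeds its threshold.
POLICY = {"violence": 0.5, "hate": 0.3}


def mask_pii(text):
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_PATTERN.sub("[EMAIL]", text)
    return PHONE_PATTERN.sub("[PHONE]", text)


def is_blocked(topic_scores, policy=POLICY):
    """Return True if any topic score reaches the threshold set in the policy."""
    return any(
        topic_scores.get(topic, 0.0) >= threshold
        for topic, threshold in policy.items()
    )


print(mask_pii("Contact jane.doe@example.com or +61 400 123 456"))
print(is_blocked({"violence": 0.7}))
```

Keeping the thresholds in one policy object mirrors the idea of defining rules centrally and applying them to every model that sits behind them.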

Google Targeting Creators

Due to the potential impact on their core search business, Google took a measured approach to entering the GenAI field, compared to newer players like OpenAI and Perplexity. The usability of Google’s chatbot, Gemini, has improved significantly since its initial launch under the moniker Bard. Its image generator, however, was pulled earlier this year while the company works out how to carefully tread the line between creativity and sensitivity. Based on recent demos though, it plans to target content creators with images (Imagen 3), video generation (Veo), and music (Lyria).

Like Microsoft, Google has seen that GenAI is a natural fit for collaboration and office productivity. Gemini can now assist from the sidebar of Workspace apps, like Docs, Sheets, Slides, Drive, Gmail, and Meet. With Google Search already a critical productivity tool for most knowledge workers, the company is determined to remain a leader in the GenAI era.

At their recent Cloud Next event, Google announced Gemini Code Assist, a GenAI-powered development tool that is more robust than its previous offering. Using RAG, it can customise suggestions for developers by accessing an organisation’s private codebase. With a large one-million-token context window, it also has full codebase awareness, making it possible to make extensive changes at once.

The Hardware Problem of AI

The demands that GenAI places on compute and memory have created a shortage of AI chips, causing the valuation of GPU giant, NVIDIA, to skyrocket into the trillions of dollars. Though the initial training is most hardware-intensive, its importance will only rise as organisations leverage proprietary data for custom model development. Inferencing is less compute-heavy for early use cases, such as text generation and coding, but will be dwarfed by the needs of image, video, and audio creation.

Realising compute and memory will be a bottleneck, the hyperscalers are looking to solve this constraint by innovating with new chip designs of their own. AWS has custom-built specialised chips – Trainium2 and Inferentia2 – to bring down costs compared to traditional compute instances. Similarly, Microsoft announced the Maia 100, which it developed in conjunction with OpenAI. Google also revealed its 6th-generation tensor processing unit (TPU), Trillium, with significant increases in power efficiency, high bandwidth memory capacity, and peak compute performance.

The Future of the GenAI Landscape

As enterprises gain experience with GenAI, they will look to partner with providers that they can trust. Challenges around data security, governance, lineage, model transparency, and hallucination management will all need to be resolved. Additionally, controlling compute costs will begin to matter as GenAI initiatives start to scale. Enterprises should explore a multi-provider approach and leverage specialised data management vendors to ensure a successful GenAI journey.

As tech providers such as Microsoft enhance their capabilities and products, they will impact business processes and technology skills, and influence other tech providers to reshape their product and service offerings. Microsoft recently organised briefing sessions in Sydney and Singapore, to present their future roadmap, with a focus on their AI capabilities.

Ecosystm Advisors Achim Granzen, Peter Carr, and Tim Sheedy provide insights on Microsoft’s recent announcements and messaging.

Click here to download Ecosystm VendorSphere: Microsoft’s AI Vision – Initiatives & Impact

Ecosystm Question: What are your thoughts on Microsoft Copilot?

Tim Sheedy. The future of GenAI will not be about single LLMs getting bigger and better – it will be about the use of multiple large and small language models working together to solve specific challenges. It is wasteful to use a large and complex LLM to solve a problem that is simpler. Getting these models to work together will be key to solving industry and use case specific business and customer challenges in the future. Microsoft is already doing this with Microsoft 365 Copilot.

Achim Granzen. Microsoft’s Copilot – a shrink-wrapped GenAI tool based on OpenAI – has become a mainstream product. Microsoft has made it available to their enterprise clients in multiple ways: for personal productivity in Microsoft 365, for enterprise applications in Dynamics 365, for developers in GitHub and Copilot Studio, and to partners to integrate Copilot into their application suites (e.g. Amdocs’ Customer Engagement Platform).

Ecosystm Question: How, in your opinion, is the Microsoft Copilot a game changer?

Microsoft’s Customer Copyright Commitment, initially launched as Copilot Copyright Commitment, is the true game changer.

Achim Granzen. It safeguards Copilot users from potential copyright infringement lawsuits related to data used for algorithm training or output results. In November 2023, Microsoft expanded its scope to cover commercial usage of their OpenAI interface as well.

This move not only protects commercial clients using Microsoft’s GenAI products but also extends to any GenAI solutions built by their clients. This initiative significantly reduces a key risk associated with GenAI adoption, outlined in the product terms and conditions.

However, compliance with a set of Required Mitigations and Codes of Conduct is necessary for clients to benefit from this commitment, aligning with responsible AI guidelines and best practices.

Ecosystm Question: Where will organisations need most help on their AI journeys?

Peter Carr. Unfortunately, there is no playbook for AI.

- The path to integrating AI into business strategies and operations lacks a one-size-fits-all guide. Organisations will have to navigate uncharted territories for the time being. This means experimenting with AI applications and learning from successes and failures. This exploratory approach is crucial for leveraging AI’s potential while adapting to unique organisational challenges and opportunities. So, companies that are better at agile innovation will do better in the short term.

- The effectiveness of AI is deeply tied to the availability and quality of connected data. AI systems require extensive datasets to learn and make informed decisions. Ensuring data is accessible, clean, and integrated is fundamental for AI to accurately analyse trends, predict outcomes, and drive intelligent automation across various applications.

Ecosystm Question: What advice would you give organisations adopting AI?

Tim Sheedy. It is all about opportunities and responsibility.

- There is a strong need for responsible AI – at a global level, at a country level, at an industry level and at an organisational level. Microsoft (and other AI leaders) are helping to create responsible AI systems that are fair, reliable, safe, private, secure, and inclusive. There is still a long way to go, and these capabilities do not completely indemnify users of AI. They still have a responsibility to set guardrails in their own businesses about the use and opportunities for AI.

- AI and hybrid work are often discussed as different trends in the market, with different solution sets. But in reality, they are deeply linked. AI can help enhance and improve hybrid work in businesses – and is a great opportunity to demonstrate the value of AI and tools such as Copilot.

Ecosystm Question: What should Microsoft focus on?

Tim Sheedy. Microsoft faces a challenge in educating the market about adopting AI, especially Copilot. They need to educate business, IT, and AI users on embracing AI effectively. Additionally, they must educate existing partners and find new AI partners to drive change in their client base. Success in the race for knowledge workers requires not only being first but also helping users maximise solutions. Customers have limited visibility of Copilot’s capabilities today. Improving customer upskilling and enhancing tools to prompt users to leverage capabilities will contribute to Microsoft’s (or their competitors’) success in dominating the AI tool market.

Peter Carr. Grassroots businesses form the economic foundation of the Asia Pacific economies. Typically, these businesses do not engage with global SIs (GSIs), which drive Microsoft’s new service offerings. This leads to an adoption gap in the sector that could benefit most from operational efficiencies. To bridge this gap, Microsoft must empower non-GSI partners and managed service providers (MSPs) at the local and regional levels. They won’t achieve their goal of democratising AI, unless they do. Microsoft has the potential to advance AI technology while ensuring fair and widespread adoption.

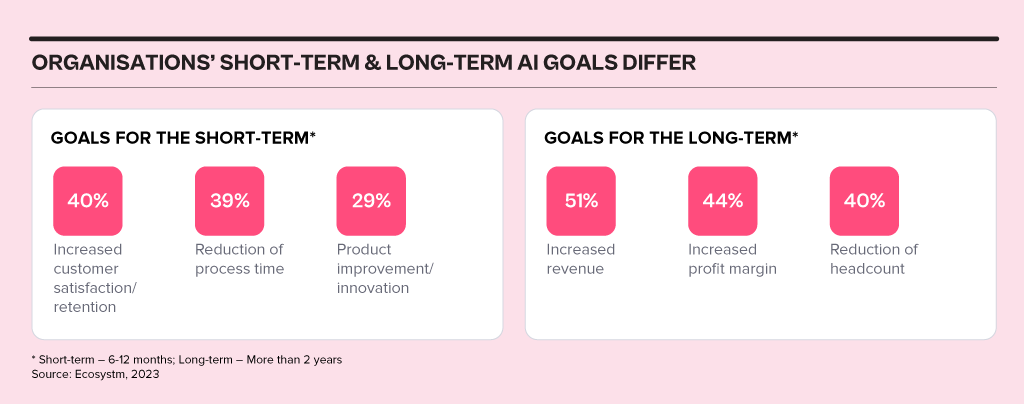

In 2024, business and technology leaders will leverage the opportunity presented by the attention being received by Generative AI engines to test and integrate AI comprehensively across the business. Many organisations will prioritise the alignment of their initial Generative AI initiatives with broader AI strategies, establishing distinct short-term and long-term goals for their AI investments.

AI adoption will influence business processes, technology skills, and, in turn, reshape the product/service offerings of AI providers.

Ecosystm analysts Achim Granzen, Peter Carr, Richard Wilkins, Tim Sheedy, and Ullrich Loeffler present the top 5 AI trends in 2024.

Click here to download ‘Ecosystm Predicts: Top 5 AI Trends in 2024’.

#1 By the End of 2024, Gen AI Will Become a ‘Hygiene Factor’ for Tech Providers

AI has widely been commended as the ‘game changer’ that will create and extend the divide between adopters and laggards and be the deciding factor for success and failure.

Cutting through the hype, strategic adoption of AI is still at a nascent stage and 2024 will be another year where companies identify use cases, experiment with POCs, and commit renewed efforts to get their data assets in order.

The biggest impact of AI will be derived from integrated AI capability in standard packaged software and products – and this will include Generative AI. We will see a plethora of product releases that seamlessly weave Generative AI into everyday tools generating new value through increased efficiency and user-friendliness.

Technology will be the first industry where AI becomes the deciding factor between success and failure; tech providers will be forced to deliver on their AI promises or be left behind.

#2 Gen AI Will Disrupt the Role of IT Architects

Traditionally, IT has relied on three-tier architectures for applications, which faced limitations in scalability and real-time responsiveness. The emergence of microservices, containerisation, and serverless computing has paved the way for event-driven designs, a paradigm shift that decouples components and uses events like user actions or data updates as triggers for actions across distributed services. This approach enhances agility, scalability, and flexibility in the system.
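A minimal in-process sketch shows what decoupling through events looks like. The `EventBus` class, the `order_placed` event, and the two handlers are hypothetical names chosen for illustration; in a distributed system the bus would be a message broker or managed event service rather than a Python object.

```python
from collections import defaultdict


class EventBus:
    """Toy publish/subscribe bus: publishers never call subscribers directly."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, event_type, handler):
        """Register a handler to be called whenever event_type is published."""
        self._handlers[event_type].append(handler)

    def publish(self, event_type, payload):
        """Deliver the payload to every handler subscribed to event_type."""
        for handler in self._handlers[event_type]:
            handler(payload)


bus = EventBus()
audit_log = []
inventory = {"widget": 5}


def reserve_stock(order):
    """Inventory service: reacts to an order without knowing who placed it."""
    inventory[order["item"]] -= order["quantity"]


def record_audit(order):
    """Audit service: a second, independent reaction to the same event."""
    audit_log.append(f"order {order['id']} placed")


bus.subscribe("order_placed", reserve_stock)
bus.subscribe("order_placed", record_audit)

# A user action becomes an event; both services respond independently.
bus.publish("order_placed", {"id": 1, "item": "widget", "quantity": 2})
print(inventory, audit_log)
```

Adding a third reaction, such as sending a confirmation email, means registering one more subscriber; the code that publishes the event does not change.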

The shift towards event-driven designs and advanced architectural patterns presents a compelling challenge for IT Architects, as traditionally their role revolved around designing, planning and overseeing complex systems.

Generative AI is progressively demonstrating capabilities in architectural design through pattern recognition, predictive analytics, and automated decision-making.

With the adoption of Generative AI, the role of an IT Architect will change into a symbiotic relationship where human expertise collaborates with AI insights.

#3 Gen AI Adoption Will be Confined to Specific Use Cases

A little over a year ago, a new era in AI began with the initial release of OpenAI’s ChatGPT. Since then, many organisations have launched Generative AI pilots.

In its second year, enterprises will start adoption – but in strictly defined and limited use cases. Examples such as Microsoft Copilot demonstrate an early adopter route. While productivity increases for individuals can be significant, its enterprise impact is unclear (at this time).

But there are impactful use cases in enterprise knowledge and document management. Organisations across industries have decades (or even a century) of information, including digitised documents and staff expertise. That treasure trove of information can be made accessible through cognitive search and semantic answering, driven by Generative AI.

Generative AI will provide organisations with a way to access, distill, and create value out of that data – a task that may well be impossible to achieve in any other way.

#4 Gen AI Will Get Press Inches; ‘Traditional’ AI Will Do the Hard Work

While the use cases for Generative AI will continue to expand, the deployment models and architectures for enterprise Generative AI do not add up – yet.

Running Generative AI in organisations’ data centres is costly and using public models for all but the most obvious use cases is too risky. Most organisations opt for a “small target” strategy, implementing Generative AI in isolated use cases within specific processes, teams, or functions. Justifying investment in hardware, software, and services for an internal AI platform is challenging when the payback for each AI initiative is not substantial.

“Traditional AI/ML” will remain the workhorse, with a significant rise in use cases and deployments. Organisations are used to investing for AI by individual use cases. Managing process change and training is also more straightforward with traditional AI, as the changes are implemented in a system or platform, eliminating the need to retrain multiple knowledge workers.

#5 AI Will Pioneer a 21st Century BPM Renaissance

As we near the 25-year milestone of the 21st century, it becomes clear that many businesses are still operating with 20th-century practices and philosophies.

AI, however, represents more than a technological breakthrough; it offers a new perspective on how businesses operate and is akin to a modern interpretation of Business Process Management (BPM). This development carries substantial consequences for digital transformation strategies. To fully exploit the potential of AI, organisations need to commit to an extensive and ongoing process, from the collection, organisation, and expansion of data to the integration of these insights at an application and workflow level.

The role of AI will transcend technological innovation, becoming a driving force for substantial business transformation. Sectors that specialise in workflow, data management, and organisational transformation are poised to see the most growth in 2024 because of this shift.

Google recently extended its Generative AI, Bard, to include coding in more than 20 programming languages, including C++, Go, Java, JavaScript, and Python. The search giant has been eager to respond to last year’s launch of ChatGPT but as the trusted incumbent, it has naturally been hesitant to move too quickly. The tendency for large language models (LLMs) to produce controversial and erroneous outputs has the potential to tarnish established brands. Google Bard was released in March in the US and the UK as an LLM but lacked the coding ability of OpenAI’s ChatGPT and Microsoft’s Bing Chat.

Bard’s new features include code generation, optimisation, debugging, and explanation. Using natural language processing (NLP), users can explain their requirements to the AI and ask it to generate code that can then be exported to an integrated development environment (IDE) or executed directly in the browser with Google Colab. Similarly, users can request Bard to debug already existing code, explain code snippets, or optimise code to improve performance.

Google continues to refer to Bard as an experiment and highlights that, as is the case with generated text, code produced by the AI may not function as expected. Regardless, the new functionality will be useful for both beginner and experienced developers. Those learning to code can use Generative AI to debug and explain their mistakes or write simple programs. More experienced developers can use the tool to perform lower-value work, such as commenting on code, or scaffolding to identify potential problems.

GitHub Copilot X to Face Competition

While the ability for Bard, Bing, and ChatGPT to generate code is one of their most important use cases, developers are now demanding AI directly in their IDEs.

In March, Microsoft made one of its most significant announcements of the year when it demonstrated GitHub Copilot X, which embeds GPT-4 in the development environment. Earlier this year, Microsoft invested $10 billion into OpenAI to add to the $1 billion from 2019, cementing the partnership between the two AI heavyweights. Among other benefits, this agreement makes Azure the exclusive cloud provider to OpenAI and provides Microsoft with the opportunity to enhance its software with AI co-pilots.

Currently under technical preview, Copilot X will integrate into Visual Studio, Microsoft’s IDE, when it eventually launches. Presented as a sidebar or chat directly in the IDE, Copilot X will be able to generate, explain, and comment on code, debug, write unit tests, and identify vulnerabilities. The “Hey, GitHub” functionality will allow users to chat using voice, suitable for mobile users or more natural interaction on a desktop.

Not to be outdone by its cloud rivals, in April, AWS announced the general availability of what it describes as a real-time AI coding companion. Amazon CodeWhisperer integrates with a range of IDEs, namely Visual Studio Code, IntelliJ IDEA, CLion, GoLand, WebStorm, Rider, PhpStorm, PyCharm, RubyMine, and DataGrip, or natively in AWS Cloud9 and the AWS Lambda console. While the preview worked for Python, Java, JavaScript, TypeScript, and C#, the general release extends support for most languages. Amazon’s key differentiation is that it is available for free to individual users, while GitHub Copilot is currently subscription-based with exceptions only for teachers, students, and maintainers of open-source projects.

The Next Step: Generative AI in Security

The next battleground for Generative AI will be assisting overworked security analysts. Currently, some of the greatest challenges that Security Operations Centres (SOCs) face are being understaffed and overwhelmed with the number of alerts. Security vendors, such as IBM and Securonix, have already deployed automation to reduce alert noise and help analysts prioritise tasks to avoid responding to false threats.

Google recently introduced Sec-PaLM and Microsoft announced Security Copilot, bringing the power of Generative AI to the SOC. These tools will help analysts interact conversationally with their threat management systems and will explain alerts in natural language. How effective these tools will be is yet to be seen, considering hallucinations in security are far riskier than in writing an essay with ChatGPT.

The Future of AI Code Generators

Although GitHub Copilot and Amazon CodeWhisperer had already launched with limited feature sets, it was the release of ChatGPT last year that ushered in a new era in AI code generation. There is now a race between the cloud hyperscalers to win over developers and to provide AI that supports other functions, such as security.

Despite fears that AI will replace humans, in its current state it is more likely to be used as a tool to augment developers. Although AI and automated testing reduce the burden on the already stretched workforce, humans will continue to be in demand to ensure code is secure and satisfies requirements. A likely scenario is that with coding becoming simpler, rather than the number of developers shrinking, the volume and quality of code written will increase. AI will generate a new wave of citizen developers able to work on projects that would previously have been impossible to start. This may, in turn, increase demand for developers to build on these proofs-of-concept.

How the Generative AI landscape evolves over the next year will be interesting. In a recent interview, OpenAI’s founder, Sam Altman, explained that the non-profit model it initially pursued is not feasible, necessitating the launch of a capped-for-profit subsidiary. The company retains its values, however, focusing on advancing AI responsibly and transparently with public consultation. The appearance of Microsoft, Google, and AWS will undoubtedly change the market dynamics and may force OpenAI to at least reconsider its approach once again.

Microsoft’s intention to invest a further USD 10B in OpenAI – the owner of ChatGPT and DALL-E 2 – confirms what we said in Ecosystm Predicts: cloud will be replaced by AI as the right transformation goal. Microsoft has already invested an estimated USD 3B in the company since 2019. Let’s take a look at what this means to the tech industry.

Implications for OpenAI & Microsoft

OpenAI’s tools – such as ChatGPT and the image engine DALL-E 2 – require significant processing power to operate, particularly as they move beyond beta programs and offer services at scale. In a single week in December, the company moved past 1 million users for ChatGPT alone. The company must be burning through cash at a significant rate. This means they need significant funding to keep the lights on, particularly as the capability of the product continues to improve and the amount of data, images and content it trawls continues to expand. ChatGPT is being talked about as one of the most revolutionary tech capabilities of the decade – but it will be all for nothing if the company doesn’t have the resources to continue to operate!

This is huge for Microsoft! Much has already been discussed about the opportunity for Microsoft to compete with Google more effectively for search-related advertising dollars. But every product and service that Microsoft develops can be enriched and improved by ChatGPT:

- A spreadsheet tool that automatically categorises data and extracts insights

- A word processing tool that creates content automatically

- A CRM that creates custom offers for every individual customer based on their current circumstances

- A collaboration tool that gets answers to questions before they are even asked and acts on the insights and analytics that it needs to drive the right customer and business outcomes

- A presentation tool that creates slides with compelling storylines based on the needs of specific audiences

- LinkedIn providing the insights users need to achieve their outcomes

- A cloud-based AI engine that can be embedded into any process or application through a simple API call (this already exists!)

How Microsoft chooses to monetise these opportunities is up to the company – but the investment certainly puts Microsoft in the box seat to monetise the AI services through their own products while also taking a cut from other ways that OpenAI monetises their services.

Impact on Microsoft’s competitors

Microsoft’s investment in OpenAI will accelerate the rate of AI development and adoption. As we move into the AI era, everything will change. New business opportunities will emerge, and traditional ones will disappear. Markets will be created and destroyed. Microsoft’s investment is an attempt for the company to end up on the right side of this equation. But the other existing (and yet to be created) AI businesses won’t just give up. The Microsoft investment will create a greater urgency for Google, Apple, and others to accelerate their AI capabilities and investments. And we will see investments in OpenAI’s competitors, such as Stability AI (which raised USD 101M in October 2022).

What will change for enterprises?

Too many businesses have put “the cloud” at the centre of their transformation strategies – as if being in the cloud is an achievement in itself. While cloud made applications and processes easier to transform (and sometimes cheaper to deploy and run), many businesses have just modernised their legacy end-to-end business processes on a better platform. True transformation happens when businesses realise that their processes only existed because of a lack of human or technology capacity to treat every customer and employee as an individual, to determine their specific needs and to deliver a custom solution for them. Not to mention the huge cost of creating unique processes for every customer! But AI does this.

AI engines have the ability to make businesses completely rethink their entire application stack. They have the ability to deliver unique outcomes for every customer. Businesses need to have AI as their transformation goal – where they put intelligence at the centre of every transformation, they will make different decisions and drive better customer and business outcomes. But once again, delivering this will take significant processing power and access to huge amounts of content and data.

The Burning Question: Who owns the outcome of AI?

In the end, ChatGPT only knows what it knows – and the content that it learns from is likely to have been created by someone (ideally – as we don’t want AI to learn from bad AI!). What we don’t really understand are the unintended consequences of commercialising AI. Will content creators be less willing to share their content? Will we see the emergence of many more walled content gardens? Will blockchain and even NFTs emerge as a way of protecting and proving origin? Will legislation protect content creators or AI engines? If everyone is using AI to create content, will all content start to look more similar (as this will be the stage at which the AI is learning from content created by AI)? And perhaps the biggest question of all – where does the human stop and the machine start?

These questions will need answers and they are not going to be answered in advance. Whatever the answers might be, we are definitely at the beginning of the next big shift in human-technology relations. Microsoft wants to accelerate this shift. For a technology analyst, 2023 just got a lot more interesting!