The promise of AI agents – intelligent programs or systems that autonomously perform tasks on behalf of people or other systems – is enormous. These systems will augment and replace human workers, offering intelligence far beyond the simple RPA (Robotic Process Automation) bots that have become commonplace in recent years.

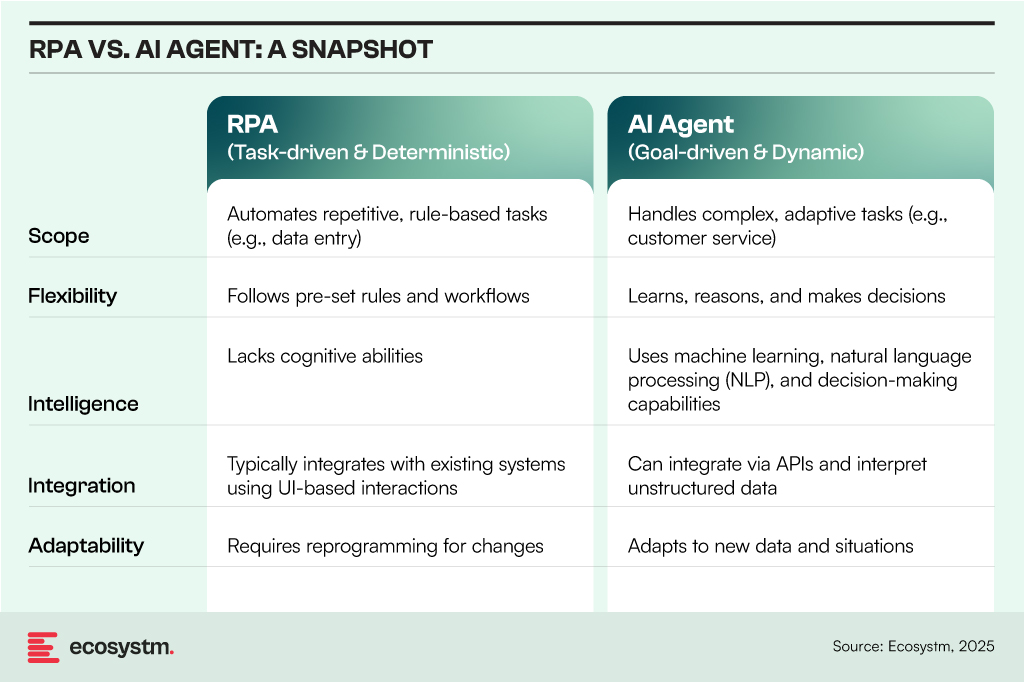

RPA and AI Agents both automate tasks but differ in scope, flexibility, and intelligence:

7 Lessons for AI Agents: Insights from RPA Deployments

However, in many ways, RPA and AI agents are similar – they both address similar challenges, albeit with different levels of automation and complexity. RPA adoption has shown that uncontrolled deployment leads to chaos, requiring a balance of governance, standardisation, and ongoing monitoring. The same principles apply to AI agent management, but with greater complexity due to AI’s dynamic and learning-based nature.

By learning from RPA’s mistakes, organisations can ensure AI agents deliver sustainable value, remain secure, and operate efficiently within a governed and well-managed environment.

#1 Controlling Sprawl with Centralised Governance

A key lesson from RPA adoption is that many organisations deployed RPA bots without a clear strategy, resulting in uncontrolled sprawl, duplicate bots, and fragmented automation efforts. This lack of oversight led to the rise of shadow IT practices, where business units created their own bots without proper IT involvement, further complicating the automation landscape and reducing overall effectiveness.

Application to AI Agents:

- Establish centralised governance early, ensuring alignment between IT and business units.

- Implement AI agent registries to track deployments, functions, and ownership.

- Enforce consistent policies for AI deployment, access, and version control.
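As a rough illustration of what an agent registry might hold, the sketch below keeps one record per agent and refuses duplicate names. The class names, fields, and the example agent are assumptions made for illustration only; a real registry would more likely sit in a database or a platform catalogue.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class AgentRecord:
    """One registry entry. All field names are illustrative assumptions."""
    name: str
    function: str
    owner: str
    version: str


@dataclass
class AgentRegistry:
    """Minimal in-memory registry keyed by agent name."""
    _records: dict = field(default_factory=dict)

    def register(self, record: AgentRecord) -> None:
        # Refuse duplicates so two teams cannot deploy the same agent unnoticed.
        if record.name in self._records:
            existing = self._records[record.name]
            raise ValueError(
                f"{record.name!r} is already registered to {existing.owner}"
            )
        self._records[record.name] = record

    def owned_by(self, owner: str) -> list:
        """Return every agent a given team is accountable for."""
        return [r for r in self._records.values() if r.owner == owner]


# Hypothetical example entry.
registry = AgentRegistry()
registry.register(
    AgentRecord("invoice-triage", "classify supplier invoices", "finance-ops", "1.0.0")
)
print(registry.owned_by("finance-ops"))
```

Rejecting a second registration under the same name is one simple way to surface duplicate effort before it reaches production.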

#2 Standardising Development and Deployment

Bot development varied across teams, with different toolsets being used by different departments. This often led to poorly documented scripts, inconsistent programming standards, and difficulties in maintaining bots. Additionally, rework and inefficiencies arose as teams developed redundant bots, further complicating the automation process and reducing overall effectiveness.

Application to AI Agents:

- Standardise frameworks for AI agent development (e.g., predefined APIs, templates, and design patterns).

- Use shared models and foundational capabilities instead of building AI agents from scratch for each use case.

- Implement code repositories and CI/CD pipelines for AI agents to ensure consistency and controlled updates.

#3 Balancing Citizen Development with IT Control

Business users, or citizen developers, created RPA bots without adhering to IT best practices, resulting in security risks, inefficiencies, and technical debt. As a result, IT teams faced challenges in tracking and supporting business-driven automation efforts, leading to a lack of oversight and increased complexity in maintaining these bots.

Application to AI Agents:

- Empower business users to build and customise AI agents but within controlled environments (e.g., low-code/no-code platforms with governance layers).

- Implement AI sandboxes where experimentation is allowed but requires approval before production deployment.

- Establish clear roles and responsibilities between IT, AI governance teams, and business users.

#4 Proactive Monitoring and Maintenance

Organisations often underestimated the effort required to maintain RPA bots, resulting in failures when process changes, system updates, or API modifications occurred. As a result, bots frequently stopped working without warning, disrupting business processes and leading to unanticipated downtime and inefficiencies. This lack of ongoing maintenance and adaptation to evolving systems contributed to significant operational disruptions.

Application to AI Agents:

- Implement continuous monitoring and logging for AI agent activities and outputs.

- Develop automated retraining and feedback loops for AI models to prevent performance degradation.

- Create AI observability dashboards to track usage, drift, errors, and security incidents.
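One simple form of continuous monitoring is a rolling error rate that raises a flag when recent failures exceed a threshold. The sketch below assumes each agent run can be labelled as a success or failure; the class name, window size, and threshold are illustrative choices, not recommended values.

```python
from collections import deque


class ErrorRateMonitor:
    """Tracks the failure rate over the most recent `window` agent runs."""

    def __init__(self, window=100, threshold=0.1):
        # A bounded deque silently drops the oldest outcome once it is full.
        self.outcomes = deque(maxlen=window)
        self.threshold = threshold

    def record(self, success):
        self.outcomes.append(bool(success))

    def error_rate(self):
        if not self.outcomes:
            return 0.0
        return self.outcomes.count(False) / len(self.outcomes)

    def needs_attention(self):
        return self.error_rate() > self.threshold


# Hypothetical run history: two failures in the last five runs.
monitor = ErrorRateMonitor(window=5, threshold=0.2)
for ok in [True, True, False, False, True]:
    monitor.record(ok)
print(monitor.error_rate(), monitor.needs_attention())
```

The same pattern could feed a dashboard or trigger a review, so that a degrading agent is noticed before it stops working altogether.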

#5 Security, Compliance, and Ethical Considerations

Insufficient security measures led to data leaks and access control issues, with bots operating under overly permissive settings. Also, a lack of proactive compliance planning resulted in serious regulatory concerns, particularly within industries subject to stringent oversight, highlighting the critical need for integrating security and compliance considerations from the outset of automation deployments.

Application to AI Agents:

- Enforce role-based access control (RBAC) and least privilege access to ensure secure and controlled usage.

- Integrate explainability and auditability features to comply with regulations like GDPR and emerging AI legislation.

- Develop an AI ethics framework to address bias, ensure decision-making transparency, and uphold accountability.
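To make role-based access control and least privilege concrete, the sketch below grants each role only the permissions it is explicitly given, denies everything else, and records every check for later audit. The role names, permission strings, and agent name are hypothetical.

```python
# Each role lists only the permissions it needs (least privilege).
# Role and permission names are illustrative assumptions.
ROLE_PERMISSIONS = {
    "invoice-reader": frozenset({"invoices:read"}),
    "invoice-processor": frozenset({"invoices:read", "invoices:update"}),
}

audit_log = []


def is_allowed(role, permission):
    """Deny by default: unknown roles and unlisted permissions are refused."""
    return permission in ROLE_PERMISSIONS.get(role, frozenset())


def perform(agent, role, permission):
    """Check a permission and record the decision for auditability."""
    allowed = is_allowed(role, permission)
    audit_log.append(
        {"agent": agent, "role": role, "permission": permission, "allowed": allowed}
    )
    return allowed


# A read-only agent can read invoices but cannot update them.
print(perform("invoice-bot", "invoice-reader", "invoices:read"))
print(perform("invoice-bot", "invoice-reader", "invoices:update"))
```

Logging refused requests as well as granted ones matters here: an agent that repeatedly asks for permissions it does not hold is itself a signal worth investigating.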

#6 Cost Management and ROI Measurement

Initial excitement led to unchecked RPA investments, but many organisations struggled to measure the ROI of bots. As a result, some RPA bots became cost centres, with high maintenance costs outweighing the benefits they initially provided. This lack of clear ROI often hindered organisations from realising the full potential of their automation efforts.

Application to AI Agents:

- Define success metrics for AI agents upfront, tracking impact on productivity, cost savings, and user experience.

- Use AI workload optimisation tools to manage computing costs and avoid overconsumption of resources.

- Regularly review AI agents’ utility and retire underperforming ones to avoid AI bloat.
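A periodic review of this kind can start with simple arithmetic: compare each agent's estimated benefit with its running and maintenance costs, and flag those that fall below an agreed return. The sketch below uses the common definition ROI = (benefit - cost) / cost; the agent names and all figures are invented purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class AgentCosts:
    """Monthly figures for one agent. Field names are illustrative."""
    name: str
    monthly_benefit: float
    monthly_run_cost: float
    monthly_maintenance_cost: float

    @property
    def total_cost(self):
        return self.monthly_run_cost + self.monthly_maintenance_cost

    @property
    def roi(self):
        # ROI = (benefit - cost) / cost; undefined when there is no cost.
        if self.total_cost == 0:
            raise ValueError(f"{self.name}: total cost is zero, ROI is undefined")
        return (self.monthly_benefit - self.total_cost) / self.total_cost


def retirement_candidates(agents, min_roi=0.0):
    """Names of agents whose ROI falls below the agreed minimum."""
    return [a.name for a in agents if a.roi < min_roi]


# Made-up figures for two hypothetical agents.
agents = [
    AgentCosts("invoice-triage", 12000.0, 3000.0, 1000.0),
    AgentCosts("meeting-summariser", 2000.0, 1500.0, 1500.0),
]
print(retirement_candidates(agents))
```

The hard part in practice is agreeing how benefit is estimated, which is why the success metrics need to be defined before deployment rather than after.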

#7 Human Oversight and Hybrid Workflows

The assumption that bots could fully replace humans led to failures in situations where exceptions, judgment, or complex decision-making were necessary. Bots struggled to handle scenarios that required nuanced thinking or flexibility, often leading to errors or inefficiencies. The most successful implementations, however, blended human and bot collaboration, leveraging the strengths of both to optimise processes and ensure that tasks were handled effectively and accurately.

Application to AI Agents:

- Integrate AI agents into human-in-the-loop (HITL) systems, allowing humans to provide oversight and validate critical decisions.

- Establish AI escalation paths for situations where agents encounter ambiguity or ethical concerns.

- Design AI agents to augment human capabilities, rather than fully replace roles.
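An escalation path can be as simple as a routing rule that sends critical or low-confidence decisions to a person. The sketch below assumes the agent reports a confidence score between 0 and 1 and that someone has marked which actions are critical; the names, the threshold, and the example actions are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    action: str
    confidence: float  # agent's self-reported confidence, 0.0 to 1.0 (assumed)
    critical: bool     # high-impact or hard to reverse


def route(decision, min_confidence=0.8):
    """Send critical or uncertain decisions to a human; approve the rest."""
    if decision.critical or decision.confidence < min_confidence:
        return "human_review"
    return "auto_approve"


# Hypothetical decisions from an agent.
print(route(Decision("tag support ticket", confidence=0.95, critical=False)))
print(route(Decision("tag support ticket", confidence=0.55, critical=False)))
print(route(Decision("issue customer refund", confidence=0.99, critical=True)))
```

Critical actions go to a human regardless of confidence, which reflects the lesson above: the agent supports the decision, but a person remains accountable for it.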

The lessons learned from RPA’s journey provide valuable insights for navigating the complexities of AI agent deployment. By addressing governance, standardisation, and ethical considerations, organisations can shift from reactive problem-solving to a more strategic approach, ensuring AI tools deliver value while operating within a responsible, secure, and efficient framework.

Over the next few months, we’re going to be focusing on the five stages of the Digital Value Journey and how an organisation and a leadership team can progress through these stages.

The Journey starts with baselining and then improving your execution capability.

Your Foundation

The quality of your execution needs to be consistently improved as you and your organisation make progress through your Journey. At the start, it is about developing the foundation on which you can build more complex and valuable business capabilities. You need that reliable, performing foundation that delivers what your customers and employees need every day.

For any Digital or IT organisation to succeed, it needs two critical things: a record of consistent success, and the trust from your organisation that past performance is a reliable predictor of the future.

Every day your customers and employees will be evaluating those two things. It’s like sitting a difficult exam every day. Failing one will be remembered – much more so than all the other exams that you successfully passed. To pass that exam you need to clearly understand what people are testing you on every day.

Understanding how the quality of your work will be evaluated is essential to creating and maintaining this firm foundation. Everything you do later in the Journey will depend on the foundation you establish at the start.

Evaluating your Foundation

And you don’t have the freedom to choose how that foundation is going to be evaluated. There is a universe – or perhaps that should be a multiverse – of metrics that you could use. Selecting the small set of metrics that your stakeholders believe demonstrate your performance is essential.

Too many metrics are just as bad as too few: you won’t know which ones to focus on, so you will end up spreading yourself too thinly. Your stakeholders are the best people to tell you how to measure your performance. Work with them to determine the one or two indicators that they really care about.

Schools and universities have improved dramatically in explaining how they are going to evaluate a student’s performance since I attended! As part of almost every assignment, educators include an evaluation rubric to guide each student. This sets out how the educator will assess the student’s work. So, getting great grades depends on understanding this rubric.

What is your rubric? Without one, how will you know what outcomes your stakeholders value and how they will evaluate your work? Spending time talking with your stakeholders about what they value is a habit that is worth developing early and maintaining throughout the Journey.

Your Next Best Steps

Getting this execution foundation right is the basis for everything that follows.

In the first of a series of webinars on 22 April, we will be introducing the concepts and ideas that we will expand on over the series.

Getting a quality education is a long hard slog – and I can’t promise that starting this Digital Journey will be any different. At each stage of the Journey different levels of capability will be required, but, as with an education, these higher levels of capability will only be possible if the right foundation is in place. So, join us to see how you can use the Digital Value Journey to lift the performance of your organisation and its Digital or IT Team.

How do digital leaders shift from providing a cost-focused to a value-focused service? At the CXO Digital Leaders Dialogue series, together with Best Case Scenario, we will be in discussion with leaders who will share their experiences with navigating these challenges.

To learn more, or to register to attend, visit here