In AI’s early days, enterprise leaders asked a straightforward question: “What can this automate?” The focus was on speed, scale, and efficiency, and AI delivered. But that question is evolving. Now, the more urgent ask is: “Can this AI understand people?”

This shift – from automation to emotional intelligence – isn’t just theoretical. It’s already transforming how organisations connect with customers, empower employees, and design digital experiences. We’re shifting to a phase of humanised AI – systems that don’t just respond accurately, but intuitively, with sensitivity to mood, tone, and need.

One of the most unexpected, and revealing, AI use cases is therapy. Millions now turn to AI chat tools to manage anxiety, process emotions, and share deeply personal thoughts. What started as fringe behaviour is fast becoming mainstream. This emotional turn isn’t a passing trend; it marks a fundamental shift in how people expect technology to relate to them.

For enterprises, this raises a critical challenge: If customers are beginning to turn to AI for emotional support, what kind of relationship do they expect from it? And what does it take to meet that expectation – not just effectively, but responsibly, and at scale?

The Rise of Chatbot Therapy

Therapy was never meant to be one of AI’s first mass-market emotional use cases, and yet here we are.

Apps like Wysa, Serena, and Youper have been quietly reshaping the digital mental health landscape for years, offering on-demand support through chatbots. Designed by clinicians, these tools draw on established methods like Cognitive Behavioural Therapy (CBT) and mindfulness to help users manage anxiety, depression, and stress. The conversations are friendly, structured, and often, surprisingly helpful.

But something even more unexpected is happening: people are now using general-purpose AI tools like ChatGPT for therapeutic support, even though these tools were not designed for it. Increasingly, users are turning to ChatGPT to talk through emotions, navigate relationship issues, or manage daily stress. Reddit threads and social posts describe it being used as a therapist or sounding board. This isn’t Replika or Wysa, but a general AI assistant being shaped into a personal mental health tool purely through user behaviour.

This shift is driven by a few key factors. First, access. Traditional therapy is expensive, hard to schedule, and for many, emotionally intimidating. AI, on the other hand, is always available, listens without judgement, and never gets tired.

Tone plays a big role too. Thanks to advances in reinforcement learning and tone conditioning, models like ChatGPT are trained to respond with calm, non-judgemental empathy. The result feels emotionally safe, a rare and valuable quality for those facing anxiety, isolation, or uncertainty. A recent PLOS study found that not only did participants struggle to tell human therapists apart from ChatGPT, they actually rated the AI responses as more validating and empathetic.

Finally, and perhaps surprisingly, there is trust. Unlike wellness apps that push subscriptions or ads, AI chat feels personal and agenda-free. Users feel in control of the interaction – no small thing in a space as vulnerable as mental health.

None of this suggests AI should replace professional care. Risks like dependency, misinformation, or reinforcing harmful patterns are real. But it does send a powerful signal to enterprise leaders: people now expect digital systems to listen, care, and respond with emotional intelligence.

That expectation is changing how organisations design experiences – from how a support bot speaks to customers, to how an internal wellness assistant checks in with employees during a tough week. Humanised AI is no longer a niche feature of digital companions. It’s becoming a UX standard, one that signals care, builds trust, and deepens relationships.

Digital Companionship as a Solution for Support

Ten years ago, talking to your AI meant asking Siri to set a reminder. Today, it might mean sharing your feelings with a digital companion, seeking advice from a therapy chatbot, or even flirting with a virtual persona! This shift from functional assistant to emotional companion marks more than a technological leap. It reflects a deeper transformation in how people relate to machines.

One of the earliest examples of this is Replika, launched in 2017, which lets users create personalised chatbot friends or romantic partners. As GenAI advanced, so did Replika’s capabilities: it can now remember past conversations, adapt its tone, and even exchange voice messages. A Nature study found that 90% of Replika users reported high levels of loneliness compared to the general population, but nearly half said the app gave them a genuine sense of social support.

Replika isn’t alone. In China, Xiaoice (spun off from Microsoft in 2020) has hundreds of millions of users, many of whom chat with it daily for companionship. In elder care, ElliQ, a tabletop robot designed for seniors, has shown striking results: a report from New York State’s Office for the Aging cited a 95% drop in loneliness among participants.

Even more freeform platforms like Character.AI, where users converse with AI personas ranging from historical figures to fictional characters, are seeing explosive growth. People are spending hours in conversation – not to get things done, but to feel seen, inspired, or simply less alone.

The Technical Leap: What Has Changed Since the LLM Explosion

The use of LLMs for code editing and content creation is already mainstream in most enterprises, but use cases have expanded alongside the capabilities of new models. LLMs now have the capacity to act more human – to carry emotional tone, remember user preferences, and maintain conversational continuity.

Key advances include:

- Memory. Persistent context and long-term recall

- Reinforcement Learning from Human Feedback (RLHF). Empathy and safety by design

- Sentiment and Emotion Recognition. Reading mood from text, voice, and expression

- Role Prompting. Personas using brand-aligned tone and behaviour

- Multimodal Interaction. Combining text, voice, image, gesture, and facial recognition

- Privacy-Sensitive Design. On-device inference, federated learning, and memory controls

Enterprise Implications: Emotionally Intelligent AI in Action

The examples shared might sound fringe or futuristic, but they reveal something real: people are now open to emotional interaction with AI. And that shift is creating ripple effects. If your customer service chatbot feels robotic, it pales in comparison to the AI friend someone chats with on their commute. If your HR wellness bot gives stock responses, it may fall flat next to the AI that helped a user through a panic attack the night before.

The lesson for enterprises isn’t to mimic friendship or romance, but to recognise the rising bar for emotional resonance. People want to feel understood. Increasingly, they expect that even from machines.

For enterprises, this opens new opportunities to tap into both emotional intelligence and public comfort with humanised AI. Emerging use cases include:

- Customer Experience. AI that senses tone, adapts responses, and knows when to escalate

- Brand Voice. Consistent personality and tone embedded in AI interfaces

- Employee Wellness. Assistants that support mental health, coaching, and daily check-ins

- Healthcare & Elder Care. Companions offering emotional and physical support

- CRM & Strategic Communications. Emotion-aware tools that guide relationship building
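
As a rough illustration of the Customer Experience item above, the sketch below shows one way a support bot might sense tone, adapt its reply style, and decide when to escalate. It is a minimal sketch under stated assumptions: the keyword lists, the `Decision` type, and the `assess_message` function are hypothetical names invented for this example, and plain word matching stands in for a real sentiment or emotion model.

```python
from dataclasses import dataclass

# Hypothetical keyword lists for illustration only; a production system would
# use a trained sentiment or emotion model instead of word matching.
NEGATIVE_WORDS = {"angry", "furious", "useless", "terrible", "cancel", "frustrated"}
DISTRESS_WORDS = {"panic", "hopeless", "desperate"}


@dataclass
class Decision:
    tone: str         # "neutral", "negative", or "distressed"
    escalate: bool    # True when a human agent should take over
    reply_style: str  # instruction passed on to the response generator


def assess_message(text: str, prior_negative_turns: int = 0) -> Decision:
    """Classify the tone of one message and decide whether to escalate."""
    words = {w.strip(".,!?").lower() for w in text.split()}
    if words & DISTRESS_WORDS:
        # Signs of distress always go to a human, regardless of history.
        return Decision("distressed", True, "acknowledge feelings and hand over to a human")
    if words & NEGATIVE_WORDS:
        # Escalate only if the customer was already unhappy in an earlier turn.
        return Decision("negative", prior_negative_turns >= 1,
                        "apologise, be concise, offer next steps")
    return Decision("neutral", False, "friendly and informative")


first = assess_message("This is useless and I am furious!")
second = assess_message("Still terrible. I want to cancel.", prior_negative_turns=1)
print(first.tone, first.escalate)    # negative False
print(second.tone, second.escalate)  # negative True
```

The design choice worth noting is that escalation depends on conversation history as well as the current message, which is one simple way to build in the human fallback discussed later in this piece.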

Ethical Design and Guardrails

Emotional AI brings not just opportunity, but responsibility. As machines become more attuned to human feelings, ethical complexity grows. Enterprises must ensure transparency – users should always know they’re speaking to a machine. Emotional data must be handled with the same care as health data. Empathy should serve the user, not manipulate them. Healthy boundaries and human fallback must be built in, and organisations need to be ready for regulation, especially in sensitive sectors like healthcare, finance, and education.

Emotional intelligence is no longer just a human skill; it’s becoming a core design principle, and soon, a baseline expectation.

Those who build emotionally intelligent AI with integrity can earn trust, loyalty, and genuine connection at scale. But success won’t come from speed or memory alone – it will come from how the experience makes people feel.

When OpenAI released ChatGPT, it became obvious – and very fast – that we were entering a new era of AI. Every tech company scrambled to release a comparable service or to infuse their products with some form of GenAI. Microsoft, piggybacking on its investment in OpenAI, was the fastest to market with impressive text and image generation for the mainstream. Copilot is now embedded across its software, including Microsoft 365, Teams, GitHub, and Dynamics, to supercharge the productivity of developers and knowledge workers. However, the race is on – AWS and Google are actively developing their own GenAI capabilities.

AWS Catches Up as Enterprise Gains Importance

Without a consumer-facing AI assistant, AWS was less visible during the early stages of the GenAI boom. They have since rectified this with a USD 4B investment into Anthropic, the makers of Claude. This partnership will benefit both Amazon and Anthropic, bringing the Claude 3 family of models to enterprise customers, hosted on AWS infrastructure.

As GenAI quickly emerges from shadow IT to an enterprise-grade tool, AWS is catching up by capitalising on their position as cloud leader. Many organisations view AWS as a strategic partner, already housing their data, powering critical applications, and providing an environment that developers are accustomed to. The ability to augment models with private data already residing in AWS data repositories will make it an attractive GenAI partner.

AWS has announced the general availability of Amazon Q, their suite of GenAI tools aimed at developers and businesses. Amazon Q Developer expands on what was launched as CodeWhisperer last year. It helps developers accelerate the process of building, testing, and troubleshooting code, allowing them to focus on higher-value work. The tool, which can directly integrate with a developer’s chosen IDE, uses NLP to develop new functions, modernise legacy code, write security tests, and explain code.

Amazon Q Business is an AI assistant that can safely ingest an organisation’s internal data and connect with popular applications, such as Amazon S3, Salesforce, Microsoft Exchange, Slack, ServiceNow, and Jira. Access controls can be implemented to ensure data is only shared with authorised users. It leverages AWS’s visualisation tool, QuickSight, to summarise findings. It also integrates directly with applications like Slack, allowing users to query it directly.

Going a step further, Amazon Q Apps (in preview) allows employees to build their own lightweight GenAI apps using natural language. These employee-created apps can then be published to an enterprise’s app library for broader use. This no-code approach to development and deployment is part of a drive to use AI to increase productivity across lines of business.

AWS continues to expand on Bedrock, their managed service providing access to foundation models from companies like Mistral AI, Stability AI, Meta, and Anthropic. The service also allows customers to bring their own model in cases where they have already pre-trained their own LLM. Once a model is selected, organisations can extend its knowledge base using Retrieval-Augmented Generation (RAG) to privately access proprietary data. Models can also be refined over time to improve results and offer personalised experiences for users. Another feature, Agents for Amazon Bedrock, allows multi-step tasks to be performed by invoking APIs or searching knowledge bases.
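
To make the RAG idea above more concrete, here is a minimal sketch of the general pattern: retrieve the most relevant private document for a question, then place it in the prompt sent to a model. It deliberately does not use any Bedrock API. The documents, the function names (`retrieve`, `build_prompt`), and the bag-of-words similarity are assumptions made for illustration; real deployments typically use embedding models and a vector store.

```python
import math
from collections import Counter


def tokenize(text: str) -> list:
    """Lowercase the text and strip basic punctuation from each word."""
    return [w.strip(".,?!").lower() for w in text.split() if w.strip(".,?!")]


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list, k: int = 1) -> list:
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(documents, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]


def build_prompt(query: str, documents: list, k: int = 1) -> str:
    """Augment the user question with retrieved context before calling a model."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"


# Hypothetical internal knowledge base.
DOCS = [
    "Refunds are processed within 14 days of a return request.",
    "Support is available on weekdays from 9am to 5pm.",
    "Enterprise plans include single sign-on and audit logs.",
]

print(build_prompt("How long do refunds take?", DOCS))
```

The model itself never needs to be retrained on the private data; only the prompt changes, which is why this pattern suits data that already sits in an organisation's own repositories.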

To address AI safety concerns, Guardrails for Amazon Bedrock is now available to minimise harmful content generation and avoid negative outcomes for users and brands. Contentious topics can be filtered by varying thresholds, and Personally Identifiable Information (PII) can be masked. Enterprise-wide policies can be defined centrally and enforced across multiple Bedrock models.
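
The two controls described above, topic filtering and PII masking, can be sketched generically as follows. This is not the Guardrails for Amazon Bedrock API; the regular expressions, the blocked-topic list, and the function names are hypothetical and far simpler than a production policy engine would need.

```python
import re

# Simplified patterns for illustration; real PII detection covers many more
# formats and usually relies on dedicated detection services.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s-]{7,}\d")

# Hypothetical centrally defined policy: topics the assistant must refuse.
BLOCKED_TOPICS = {"medical diagnosis", "legal advice"}


def mask_pii(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def apply_policy(text: str) -> str:
    """Refuse blocked topics; otherwise return the text with PII masked."""
    lowered = text.lower()
    if any(topic in lowered for topic in BLOCKED_TOPICS):
        return "Sorry, I cannot help with that topic."
    return mask_pii(text)


print(apply_policy("Contact jane.doe@example.com or +65 6123 4567"))
# Contact [EMAIL] or [PHONE]
```

Keeping the policy in one place, separate from any individual model call, mirrors the idea of defining rules centrally and enforcing them across several models.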

Google Targeting Creators

Due to the potential impact on their core search business, Google took a measured approach to entering the GenAI field, compared to newer players like OpenAI and Perplexity. The usability of Google’s chatbot, Gemini, has improved significantly since its initial launch under the moniker Bard. Its image generator, however, was pulled earlier this year while the company works out how to carefully tread the line between creativity and sensitivity. Based on recent demos though, it plans to target content creators with images (Imagen 3), video generation (Veo), and music (Lyria).

Like Microsoft, Google has seen that GenAI is a natural fit for collaboration and office productivity. Gemini can now assist in the sidebar of Workspace apps, like Docs, Sheets, Slides, Drive, Gmail, and Meet. With Google Search already a critical productivity tool for most knowledge workers, Google is determined to remain a leader in the GenAI era.

At their recent Cloud Next event, Google announced Gemini Code Assist, a GenAI-powered development tool that is more robust than its previous offering. Using RAG, it can customise suggestions for developers by accessing an organisation’s private codebase. With a large one-million-token context window, it also has full codebase awareness, making it possible to make extensive changes at once.

The Hardware Problem of AI

The demands that GenAI places on compute and memory have created a shortage of AI chips, causing the valuation of GPU giant NVIDIA to skyrocket into the trillions of dollars. Although initial training is the most hardware-intensive stage, the importance of training hardware will only rise as organisations leverage proprietary data for custom model development. Inferencing is less compute-heavy for early use cases, such as text generation and coding, but these needs will be dwarfed by those of image, video, and audio creation.

Realising compute and memory will be a bottleneck, the hyperscalers are looking to solve this constraint by innovating with new chip designs of their own. AWS has custom-built specialised chips – Trainium2 and Inferentia2 – to bring down costs compared to traditional compute instances. Similarly, Microsoft announced the Maia 100, which it developed in conjunction with OpenAI. Google also revealed its 6th-generation tensor processing unit (TPU), Trillium, with significant increases in power efficiency, high-bandwidth memory capacity, and peak compute performance.

The Future of the GenAI Landscape

As enterprises gain experience with GenAI, they will look to partner with providers that they can trust. Challenges around data security, governance, lineage, model transparency, and hallucination management will all need to be resolved. Additionally, controlling compute costs will begin to matter as GenAI initiatives start to scale. Enterprises should explore a multi-provider approach and leverage specialised data management vendors to ensure a successful GenAI journey.

GenAI has taken the world by storm, with organisations big and small eager to pilot use cases for automation and productivity boosts. Tech giants like Google, AWS, and Microsoft are offering cloud-based GenAI tools, but the demand is straining the infrastructure needed for training and deploying the large language models (LLMs) behind tools like ChatGPT and Bard.

Understanding the Demand for Chips

The microchip manufacturing process is intricate, involving hundreds of steps and spanning up to four months from design to mass production. The significant expense and lengthy manufacturing process for semiconductor plants have led to global demand surpassing supply. This imbalance affects technology companies, automakers, and other chip users, causing production slowdowns.

Supply chain disruptions, raw material shortages (such as rare earth metals), and geopolitical situations have also played a part in chip shortages. For example, restrictions by the US on China’s largest chip manufacturer, SMIC, made it harder for them to sell to several organisations with American ties. This triggered a ripple effect, prompting tech vendors to start hoarding hardware, and worsening supply challenges.

As AI advances and organisations start exploring GenAI, specialised AI chips are becoming the need of the hour to meet their immense computing demands. AI chips can include graphics processing units (GPUs), application-specific integrated circuits (ASICs), and field-programmable gate arrays (FPGAs). These specialised AI accelerators can be tens or even thousands of times faster and more efficient than CPUs when it comes to AI workloads.

The surge in GenAI adoption across industries has heightened the demand for improved chip packaging, as advanced AI algorithms require more powerful and specialised hardware. Effective packaging solutions must manage heat and power consumption for optimal performance. TSMC, one of the world’s largest chipmakers, announced a shortage in advanced chip packaging capacity at the end of 2023, which is expected to persist through 2024.

The scarcity of essential hardware, limited manufacturing capacity, and AI packaging shortages have impacted tech providers. Microsoft acknowledged the AI chip crunch as a potential risk factor in their 2023 annual report, emphasising the need to expand data centre locations and server capacity to meet customer demands, particularly for AI services. The chip squeeze has highlighted the dependency of tech giants on semiconductor suppliers. To address this, companies like Amazon and Apple are investing heavily in internal chip design and production to reduce dependence on large players such as NVIDIA – the current leader in AI chip sales.

How are Chipmakers Responding?

NVIDIA, one of the largest manufacturers of GPUs, has been forced to pivot its strategy in response to this shortage. The company has shifted focus towards developing chips specifically designed to handle complex AI workloads, such as the A100 and V100 GPUs. These AI accelerators feature specialised hardware like tensor cores optimised for AI computations, high memory bandwidth, and native support for AI software frameworks.

While this move positions NVIDIA at the forefront of the AI hardware race, experts say that it comes at a significant cost. By reallocating resources towards AI-specific GPUs, the company’s ability to meet the demand for consumer-grade GPUs has been severely impacted. This strategic shift has worsened the ongoing GPU shortage, further straining the market dynamics surrounding GPU availability and demand.

Others like Intel, a stalwart in traditional CPUs, are expanding into AI, edge computing, and autonomous systems. A significant competitor to Intel in high-performance computing, AMD acquired Xilinx to offer integrated solutions combining high-performance central processing units (CPUs) and programmable logic devices.

Global Resolve Key to Address Shortages

Governments worldwide are boosting chip capacity to tackle the semiconductor crisis and fortify supply chains. Initiatives like the CHIPS for America Act and the European Chips Act aim to bolster domestic semiconductor production through investments and incentives. Leading manufacturers like TSMC and Samsung are also expanding production capacities, reflecting a global consensus on self-reliance and supply chain diversification. Asian governments are similarly investing in semiconductor manufacturing to address shortages and enhance their global market presence.

Japan. Japan is providing generous government subsidies and incentives to attract major foreign chipmakers such as TSMC, Samsung, and Micron to invest and build advanced semiconductor plants in the country. Subsidies have helped to bring greenfield investments in Japan’s chip sector in recent years. TSMC alone is investing over USD 20 billion to build two cutting-edge plants in Kumamoto by 2027. The government has earmarked around USD 13 billion just in this fiscal year to support the semiconductor industry.

Moreover, Japan’s collaboration with the US and the establishment of Rapidus, a chipmaker targeting advanced logic semiconductors and backed by major corporations, further show its ambitions to revitalise its semiconductor industry. Japan is also looking into advancements in semiconductor materials like silicon carbide (SiC) and gallium nitride (GaN) – crucial for powering electric vehicles, renewable energy systems, and 5G technology.

South Korea. While Taiwan holds the lead in semiconductor manufacturing volume, South Korea dominates the memory chip sector, largely due to Samsung. The country is also spending USD 470 billion over the next 23 years to build the world’s largest semiconductor “mega cluster” covering 21,000 hectares in Gyeonggi Province near Seoul. The ambitious project, a partnership with Samsung and SK Hynix, will centralise and boost self-sufficiency in chip materials and components to 50% by 2030. The mega cluster is South Korea’s bold plan to cement its position as a global semiconductor leader and reduce dependence on the US amidst growing geopolitical tensions.

Vietnam. Vietnam is actively positioning itself to become a major player in the global semiconductor supply chain amid the push to diversify away from China. The Southeast Asian nation is offering tax incentives, investing in training tens of thousands of semiconductor engineers, and encouraging major chip firms like Samsung, NVIDIA, and Amkor to set up production facilities and design centres. However, Vietnam faces challenges such as a limited pool of skilled labour, outdated energy infrastructure leading to power shortages in key manufacturing hubs, and competition from other regional players like Taiwan and Singapore that are also vying for semiconductor investments.

The Potential of SLMs in Addressing Infrastructure Challenges

Small language models (SLMs) offer reduced computational requirements compared to larger models, potentially alleviating strain on semiconductor supply chains by deploying on smaller, specialised hardware.
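
A back-of-the-envelope calculation helps show why smaller models ease hardware pressure: the memory needed just to hold a model's weights is roughly the parameter count multiplied by the bytes used per parameter. The sketch below applies that rule of thumb. The parameter counts are hypothetical round numbers chosen for illustration, not vendor figures, and the estimate ignores activations, caches, and other runtime overhead.

```python
def model_memory_gb(num_params: float, bytes_per_param: float) -> float:
    """Approximate memory to hold the weights alone, in gigabytes (10^9 bytes)."""
    return num_params * bytes_per_param / 1e9


# Hypothetical model sizes for illustration only.
small_fp16 = model_memory_gb(3e9, 2)     # 3B parameters at 16-bit precision
large_fp16 = model_memory_gb(70e9, 2)    # 70B parameters at 16-bit precision
small_int4 = model_memory_gb(3e9, 0.5)   # 3B parameters quantised to 4 bits

print(f"small, 16-bit: {small_fp16:.1f} GB")  # small, 16-bit: 6.0 GB
print(f"large, 16-bit: {large_fp16:.1f} GB")  # large, 16-bit: 140.0 GB
print(f"small, 4-bit:  {small_int4:.1f} GB")  # small, 4-bit:  1.5 GB
```

Under these assumptions, the smaller model fits within the memory of a single consumer device, while the larger one needs multiple data-centre accelerators before any request is served.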

Innovative SLMs like Google’s Gemini Nano and Mistral AI’s Mixtral 8x7B enhance efficiency, running on modest hardware, unlike their larger counterparts. Gemini Nano is integrated into Bard and available on Pixel 8 smartphones, while Mixtral 8x7B supports multiple languages and suits tasks like classification and customer support.

The shift towards smaller AI models can be pivotal to the AI landscape, democratising AI and ensuring accessibility and sustainability. While they may not be able to handle complex tasks as well as LLMs yet, the ability of SLMs to balance model size, compute power, and ethical considerations will shape the future of AI development.

Over the past year, many organisations have explored Generative AI and LLMs, with some successfully identifying, piloting, and integrating suitable use cases. As business leaders push tech teams to implement additional use cases, the repercussions on their roles will become more pronounced. Embracing GenAI will require a mindset reorientation, and tech leaders will see substantial impact across various ‘traditional’ domains.

AIOps and GenAI Synergy: Shaping the Future of IT Operations

When discussing AIOps adoption, there are commonly two responses: “Show me what you’ve got” or “We already have a team of Data Scientists building models”. The former usually demonstrates executive sponsorship without a specific business case, resulting in a lukewarm response to many pre-built AIOps solutions due to their lack of a defined business problem. On the other hand, organisations with dedicated Data Scientist teams face a different challenge. While these teams can create impressive models, they often face pushback from the business because their solutions frequently fail to address operational or business needs. The challenge arises from Data Scientists’ limited understanding of the data, hindering the development of use cases that effectively align with business needs.

The most effective approach lies in adopting an AIOps Framework. Incorporating GenAI into AIOps frameworks can enhance their effectiveness, enabling improved automation, intelligent decision-making, and streamlined operational processes within IT operations.

This allows active business involvement in defining and validating use cases, while enabling Data Scientists to focus on model building. It bridges the gap between technical expertise and business requirements, ensuring AIOps initiatives are informed by the capabilities of GenAI, address specific operational challenges, and resonate with the organisation’s goals.

The Next Frontier of IT Infrastructure

Many companies adopting GenAI are openly evaluating public cloud-based solutions like ChatGPT or Microsoft Copilot against on-premises alternatives, grappling with the trade-offs between scalability and convenience versus control and data security.

Cloud-based GenAI offers easy access to computing resources without substantial upfront investments. However, companies face challenges in relinquishing control over training data, potentially leading to inaccurate results or “AI hallucinations,” and concerns about exposing confidential data. On-premises GenAI solutions provide greater control, customisation, and enhanced data security, ensuring data privacy, but require significant hardware investments due to unexpectedly high GPU demands during both the training and inferencing stages of AI models.

Hardware companies are focusing on innovating and enhancing their offerings to meet the increasing demands of GenAI. The evolution and availability of powerful and scalable GPU-centric hardware solutions are essential for organisations to effectively adopt on-premises deployments, enabling them to access the necessary computational resources to fully unleash the potential of GenAI. Collaboration between hardware development and AI innovation is crucial for maximising the benefits of GenAI and ensuring that the hardware infrastructure can adequately support the computational demands required for widespread adoption across diverse industries. Innovations in hardware architecture, such as neuromorphic computing and quantum computing, hold promise in addressing the complex computing requirements of advanced AI models.

The synchronisation between hardware innovation and GenAI demands will require technology leaders to re-skill themselves on what they have done for years – infrastructure management.

The Rise of Event-Driven Designs in IT Architecture

IT leaders traditionally relied on three-tier architectures – presentation for user interface, application for logic and processing, and data for storage. Despite their structured approach, these architectures often lacked scalability and real-time responsiveness. The advent of microservices, containerisation, and serverless computing facilitated event-driven designs, enabling dynamic responses to real-time events, and enhancing agility and scalability. Event-driven designs are a paradigm shift away from traditional approaches, decoupling components and using events as a central communication mechanism. User actions, system notifications, or data updates trigger actions across distributed services, adding flexibility to the system.
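
The decoupling described above can be shown with a minimal in-process sketch. The `EventBus` class, the event name, and the handlers are hypothetical and exist only for illustration; a real event-driven system would typically use a message broker or a managed eventing service between independently deployed services.

```python
from collections import defaultdict
from typing import Callable, Dict, List


class EventBus:
    """Tiny publish/subscribe hub: publishers and subscribers never reference each other."""

    def __init__(self) -> None:
        self._handlers: Dict[str, List[Callable[[dict], None]]] = defaultdict(list)

    def subscribe(self, event_type: str, handler: Callable[[dict], None]) -> None:
        """Register a handler to be called whenever event_type is published."""
        self._handlers[event_type].append(handler)

    def publish(self, event_type: str, payload: dict) -> int:
        """Deliver the payload to every subscriber and return how many were called."""
        handlers = list(self._handlers.get(event_type, []))
        for handler in handlers:
            handler(payload)
        return len(handlers)


bus = EventBus()
audit_log: List[int] = []
emails: List[str] = []

# Two independent "services" react to the same event.
bus.subscribe("order_placed", lambda e: audit_log.append(e["order_id"]))
bus.subscribe("order_placed", lambda e: emails.append(f"Receipt for order {e['order_id']}"))

bus.publish("order_placed", {"order_id": 42})
print(audit_log)  # [42]
print(emails)     # ['Receipt for order 42']
```

Adding a third reaction, such as a stock update, would mean registering one more subscriber; the code that publishes the order event would not change.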

However, adopting event-driven designs presents challenges, particularly in higher transaction-driven workloads where the speed of serverless function calls can significantly impact architectural design. While serverless computing offers scalability and flexibility, the latency introduced by initiating and executing serverless functions may pose challenges for systems that demand rapid, real-time responses. Increasing reliance on event-driven architectures underscores the need for advancements in hardware and compute power. Transitioning from legacy architectures can also be complex and may require a phased approach, with cultural shifts demanding adjustments and comprehensive training initiatives.

The shift to event-driven designs challenges IT Architects, whose traditional roles involved designing, planning, and overseeing complex systems. With GenAI and automation enhancing design tasks, Architects will need to transition to more strategic and visionary roles. GenAI showcases capabilities in pattern recognition, predictive analytics, and automated decision-making, promoting a symbiotic relationship with human expertise. This evolution doesn’t replace Architects but signifies a shift toward collaboration with AI-driven insights.

IT Architects need to evolve their skill set, blending technical expertise with strategic thinking and collaboration. This changing role will drive innovation, creating resilient, scalable, and responsive systems to meet the dynamic demands of the digital age.

Whether your organisation is evaluating or implementing GenAI, the need to upskill your tech team remains imperative. The evolution of AI technologies has disrupted the tech industry, impacting people in tech. Now is the opportune moment to acquire new skills and adapt tech roles to leverage the potential of GenAI rather than being disrupted by it.

It’s been barely one year since we entered the Generative AI Age. On November 30, 2022, OpenAI launched ChatGPT, with no fanfare or promotion. Since then, Generative AI has become arguably the most talked-about tech topic, both in terms of opportunities it may bring and risks that it may carry.

The landslide success of ChatGPT and other Generative AI applications with consumers and businesses has put a renewed and strengthened focus on the potential risks associated with the technology – and how best to regulate and manage these. Government bodies and agencies have created voluntary guidelines for the use of AI for a number of years now (the Singapore Framework, for example, was launched in 2019).

There is no active legislation on the development and use of AI yet. Crucially, however, a number of such initiatives are currently on their way through legislative processes globally.

EU’s Landmark AI Act: A Step Towards Global AI Regulation

The European Union’s “Artificial Intelligence Act” is a leading example. The European Commission (EC) started examining AI legislation in 2020 with a focus on:

- Protecting consumers

- Safeguarding fundamental rights, and

- Avoiding unlawful discrimination or bias

The EC published an initial legislative proposal in 2021, and the European Parliament adopted a revised version as their official position on AI in June 2023, moving the legislation process to its final phase.

This proposed EU AI Act takes a risk management approach to regulating AI. Organisations looking to employ AI must take note: an internal risk management approach to deploying AI would essentially be mandated by the Act. It is likely that other legislative initiatives will follow a similar approach, making the AI Act a potential role model for global legislation (following the trail blazed by the General Data Protection Regulation). The “G7 Hiroshima AI Process”, established at the G7 summit in Japan in May 2023, is a key example of international discussion and collaboration on the topic (with a focus on Generative AI).

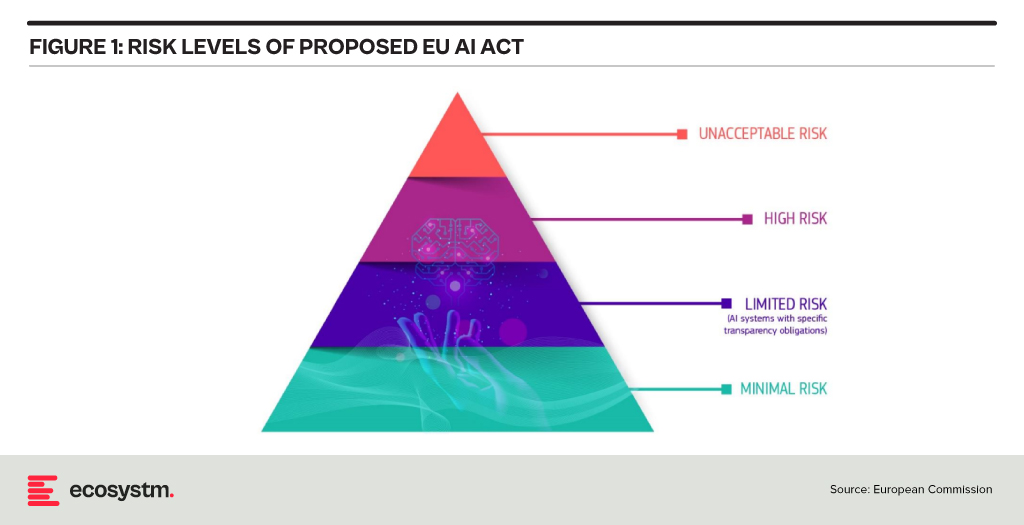

Risk Classification and Regulations in the EU AI Act

At the heart of the AI Act is a system to assess the risk level of AI technology, classify the technology (or its use case), and prescribe appropriate regulations to each risk class.

The proposal distinguishes four risk levels: unacceptable, high, limited, and minimal risk. For each of these four risk levels, the AI Act proposes a set of rules and regulations. Evidently, the regulatory focus is on High-Risk AI systems.
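
For organisations preparing an internal risk management approach, the tiered structure above can be pictured as a simple lookup from use case to risk tier to required controls. The sketch below is illustrative only: the tier names follow the Commission's proposal, but the obligation summaries are simplified paraphrases rather than legal text, and the use-case register and function name are hypothetical.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Simplified, non-authoritative summaries of what each tier implies.
OBLIGATIONS = {
    RiskTier.UNACCEPTABLE: "prohibited: do not deploy",
    RiskTier.HIGH: "risk management, documentation, and human oversight",
    RiskTier.LIMITED: "transparency notice to users",
    RiskTier.MINIMAL: "no mandatory obligations",
}

# Hypothetical internal register mapping AI use cases to a tier.
USE_CASE_REGISTER = {
    "cv_screening": RiskTier.HIGH,
    "customer_chatbot": RiskTier.LIMITED,
    "spam_filter": RiskTier.MINIMAL,
}


def required_controls(use_case: str):
    """Look up the tier and controls; unreviewed use cases default to HIGH."""
    tier = USE_CASE_REGISTER.get(use_case, RiskTier.HIGH)
    return tier, OBLIGATIONS[tier]


tier, controls = required_controls("customer_chatbot")
print(tier.value, "->", controls)  # limited -> transparency notice to users
```

Defaulting unknown use cases to the high tier is a conservative design choice: a new AI system is treated strictly until someone has reviewed and classified it.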

Contrasting Approaches: EU AI Act vs. UK’s Pro-Innovation Regulatory Approach

The AI Act has received its share of criticism, and somewhat different approaches are being considered, notably in the UK. One set of criticism revolves around the lack of clarity and vagueness of concepts (particularly around person-related data and systems). Another set of criticism revolves around the strong focus on the protection of rights and individuals and highlights the potential negative economic impact for EU organisations looking to leverage AI, and for EU tech companies developing AI systems.

A white paper published by the UK government in March 2023, perhaps tellingly named “A pro-innovation approach to AI regulation”, emphasises a “pragmatic, proportionate regulatory approach … to provide a clear, pro-innovation regulatory environment”. The paper talks about an approach aiming to balance the protection of individuals with economic advancement for the UK on its way to becoming an “AI superpower”.

Further aspects of the EU AI Act are currently being critically discussed. For example, the current text exempts all open-source AI components not part of a medium or higher risk system from regulation but lacks definition and considerations for proliferation.

Adopting AI Risk Management in Organisations: The Singapore Approach

Regardless of how exactly AI regulations will turn out around the world, organisations must start today to adopt AI risk management practices. There is an added complexity: while the EU AI Act does clearly identify high-risk AI systems and example use cases, the realisation of regulatory practices must be tackled with an industry-focused approach.

The approach taken by the Monetary Authority of Singapore (MAS) is a primary example of an industry-focused approach to AI risk management. The Veritas Consortium, led by MAS, is a public-private-tech partnership consortium aiming to guide the financial services sector on the responsible use of AI. As there is no AI legislation in Singapore to date, the consortium currently builds on Singapore’s aforementioned “Model Artificial Intelligence Governance Framework”. Additional initiatives are already underway to focus specifically on Generative AI for financial services, and to build a globally aligned framework.

To Comply with Upcoming AI Regulations, Risk Management is the Path Forward

As AI regulation initiatives move from voluntary recommendation to legislation globally, a risk management approach is at the core of all of them. Adding risk management capabilities for AI is the path forward for organisations looking to deploy AI-enhanced solutions and applications. As that task can be daunting, an industry consortium approach can help navigate challenges and align on implementation and realisation strategies for AI risk management across the industry. Until AI legislation is in place, such industry consortia can chart the way for their industry – organisations should seek to participate now to gain a head start with AI.

Generative AI is seeing enterprise interest and early adoption, enhancing efficiency, fostering innovation, and pushing the boundaries of possibility. It has the potential to reshape industries – and fast!

However, alongside its immense potential, Generative AI also raises concerns. Ethical considerations surrounding data privacy and security come to the forefront, as powerful AI systems handle vast amounts of sensitive information.

Addressing these concerns through responsible AI development and thoughtful regulation will be crucial to harnessing the full transformative power of Generative AI.

Read on to find out the key challenges faced in implementing Generative AI and explore emerging use cases in industries such as Financial Services, Retail, Manufacturing, and Healthcare.

I have spent many years analysing the mobile and end-user computing markets: going all the way back to 1995, when I was part of a Desktop PC research team, to running the European wireless and mobile comms practice, to my time at 3 Mobile in Australia and, for many years after, helping clients with their end-user computing strategies. I have followed the market from the birth of mobile data services (GPRS, WAP, and so on to 3G, 4G and 5G), from simple phones to powerful foldable devices, and from desktop computers to a complex array of mobile computing devices that meet many and varied employee needs. I am always looking for the “next big thing” – and there have been some significant milestones – Palm devices, Blackberries, the iPhone, Android, foldables, wearables, and smaller, thinner, faster, more powerful laptops.

But over the past few years, innovation in this space has tailed off. Outside of the foldable space (which is already four years old), the major benefits of new devices are faster processors, brighter screens, and better cameras. I review a lot of great computers too (like many of the recent Surface devices) – and while they are continuously improving, not much has got my clients or me “excited” over the past few years (outside of some of the very cool accessibility initiatives).

The Force of AI

But this is all about to change. Devices are going to get smarter based on their data ecosystem, the cloud, and AI-specific local processing power. To be honest, this has been happening for some time – but most of the “magic” has been invisible to us. It happened when cameras took multiple shots and selected the best one; it happened when pixels were sharpened and images got brighter, better, and more attractive; it happened when digital assistants were called upon to answer questions and provide context.

Microsoft, among others, are about to make AI smarts more front and centre of the experience – Windows Copilot will add a smart assistant that can not only advise but execute on advice. It will help employees improve their focus and productivity, summarise documents and long chat threads, select music, distribute content to the right audience, and find connections. Added to Microsoft 365 Copilot it will help knowledge workers spend less time searching and reading – and more time doing and improving.

The greater integration of public and personal data with “intent insights” will also play out on our mobile devices. We are likely to see the emergence of the much-promised “integrated app” – one that can take on many of the tasks that we currently undertake across multiple applications, mobile websites, and sometimes even multiple devices. This will initially be through the use of public LLMs like Bard and ChatGPT, but as more custom, private models emerge they will serve very specific functions.

Focused AI Chips will Drive New Device Wars

In parallel to these developments, we expect the emergence of very specific AI processors that are paired to very specific AI capabilities. As local processing power becomes a necessity for some AI algorithms, the broad CPUs – and even the AI-focused ones (like Google’s Tensor Processor) – will need to be complemented by specific chips that serve specific AI functions. These chips will perform the processing more efficiently – preserving the battery and improving the user experience.

While this will be a longer-term trend, it is likely to significantly change the game for what can be achieved locally on a device – enabling capabilities that are not in the realm of imagination today. They will also spur a new wave of device competition and innovation – with a greater desire to be on the “latest and greatest” devices than we see today!

So, while the levels of device innovation have flattened, AI-driven software and chipset innovation will see current and future devices enable new levels of employee productivity and consumer capability. The focus in 2023 and beyond needs to be less on the hardware announcements and more on the platforms and tools. End-user computing strategies need to be refreshed with a new perspective around intent and intelligence. The persona-based strategies of the past have to be changed in a world where form factors and processing power are less relevant than outcomes and insights.

After the resounding success of the inaugural event last year, Ecosystm is once again partnering with Elevandi and the State Secretariat for International Finance (SIF) as a knowledge partner for the Point Zero Forum 2023. In this Ecosystm Insights, our guest author Jaskaran Bhalla, Content Lead, Elevandi, talks about the Point Zero Forum 2023 and how it is all set to explore digital assets, sustainability, and AI in an ever-evolving Financial Services landscape.

The Point Zero Forum is returning for its second edition from 26 to 28 June 2023 in Zurich, Switzerland. The inaugural Forum held in June 2022 attracted over 1,000 leaders and featured more than 200 esteemed speakers from Europe, Asia Pacific, the USA, and MENA. The Forum represents a collaboration between the Swiss State Secretariat for International Finance (SIF) and Elevandi and is organised in cooperation with the BIS Innovation Hub, the Monetary Authority of Singapore (MAS), and the Swiss National Bank.

As we gear up for this year’s Point Zero Forum, let’s take a moment to reflect on some of the pivotal developments that have shaped the Financial Services industry since the previous Forum and also moulded the three key themes that will take centre stage this year: Sustainability, Artificial Intelligence (AI), and Digital Assets.

COP27, the rise of blended finance and the groundbreaking Net-Zero Data Public Utility

In November 2022, the Government of the Arab Republic of Egypt hosted the 27th session of the Conference of the Parties of the UNFCCC (COP27), with a view to accelerating the transition to a low-carbon future. In the build-up to COP27, Ravi Menon, the Managing Director of the MAS, spoke at the inaugural Transition Finance towards Net-Zero conference and shared with the audience that the world is currently not on a trajectory to achieve net-zero emissions by 2050. According to the UN Emissions Gap report 2021, based on the current policies in place, the world is 55% short of the emissions reduction target for 2030. He also elaborated on the significant role that blended finance can play in tackling climate change, a theme that widely resonated with the global leaders at COP27. To enable easy and transparent reporting on climate commitments, the Climate Data Steering Committee (CDSC) outlined the next steps on its recommended plans for the Net-Zero Data Public Utility (NZDPU) at COP27. NZDPU aims to aid efforts to transition to a net-zero economy by addressing data gaps, inconsistencies, and barriers to information that slow climate action.

The Point Zero Forum 2023 will deep-dive into the data, technologies, and capital and risk management solutions that can accelerate the fair transition towards a low-carbon future.

Panel Discussion Highlight: The opening panel discussion, “Data for Net-Zero: Views from the Climate Data Steering Committee,” scheduled for 26 June, will feature members of the CDSC, which include the Financial Conduct Authority, the MAS, Glasgow Financial Alliance for Net Zero (GFANZ), and the Swiss State Secretariat for International Finance. The panel will discuss the role of new technologies and collaborative platforms in promoting greater accessibility of transition data and innovative business models.

The launch of ChatGPT by OpenAI and its record for the fastest 100M monthly active users

The launch of ChatGPT by OpenAI on 30 November 2022 led to widespread adoption by users globally – eventually setting the record for the fastest-growing user base, hitting 100M monthly active users by February 2023. While users rushed to share the enormous efficiency gains achieved with ChatGPT, it also soon became a disruptive tool for spreading fake news.

The Point Zero Forum 2023 will deep-dive into Generative AI’s potential for enhancing efficiency, improving risk management, and providing better customer experience in the Financial Services industry, while highlighting the need for ensuring fair, ethical, accountable, and transparent use of these technologies.

Panel Discussion Highlight: The session “Breaking New Ground with Generative AI: Project MindForge”, scheduled for 27 June, will feature global leaders from NVIDIA, the MAS, Citigroup and Bloomberg. The panel will discuss the opportunities of Generative AI for the Financial Services sector.

MiCA regulation gets adopted by the EU lawmakers and sets a precedent for digital asset regulations

More than 2.5 years after it was first proposed, the EU Markets in Crypto-Assets (MiCA) regulation was approved in April 2023 by the EU Parliament. While there is still work to be done to implement MiCA and measure its success, and to answer open questions around regulation for out-of-scope assets (like DeFi and NFTs), the digital assets industry is keenly observing whether MiCA could serve as a template for global crypto regulation. In May 2023, the International Organization of Securities Commissions (IOSCO), the global standard setter for securities markets, also joined the global discussion on digital asset regulation by issuing for consultation detailed recommendations to jurisdictions across the globe as to how to regulate crypto assets.

The Point Zero Forum 2023 will do a stocktake on key global regulatory frameworks, market infrastructure, and use cases for the widespread adoption of digital assets, asset tokenisation, and distributed ledger technology.

Panel Discussion Highlight: The sessions “State of Global Digital Asset Regulation: Navigating Opportunities in an Evolving Landscape” and “Interoperability and Regulatory Compliance: Building the Future of Digital Asset Infrastructure”, scheduled on 26 and 27 June respectively, will feature global leaders from both public sector (such as the MAS, Bank of Italy, Bank of Thailand, U.S. Commodity Futures Trading Commission, EU Parliament) and private sector organisations (such as JP Morgan, Sygnum, SBI Digital Assets, Chainalysis, GBBC, SIX Digital Exchange). The discussions will centre around digital asset regulations and key considerations in the rapidly evolving world of digital assets.

Register here at https://www.pointzeroforum.com/registration. Receive 10% off the Industry Pass by entering the code ‘JB10’ at checkout. (Policymakers, regulators, think tanks, and academics receive complimentary access. Founders of tech companies incorporated for less than 3 years can apply for a discounted Founder’s Pass.)

It is not hyperbole to state that AI is on the cusp of having significant implications on society, business, economies, governments, individuals, cultures, politics, the arts, manufacturing, customer experience… I think you get the idea! We cannot overstate the impact that AI will have on society. In times gone by, businesses tested ideas, new products, or services with small customer segments before they went live. But with AI we are all part of this experiment on the impacts of AI on society – its benefits, use cases, weaknesses, and threats.

What seemed preposterous just six months ago is not only possible but EASY! Do you want a virtual version of yourself, a friend, your CEO, or your deceased family member? Sure – just feed the data. Will succession planning be more about recording all conversations and interactions with an executive so their avatar can make the decisions when they leave? Why not? How about you turn the thousands of hours of recorded customer conversations with your contact centre team into a virtual contact centre team? Your head of product can present in multiple countries in multiple languages, tailored to the customer segments, industries, geographies, or business needs at the same moment.

AI has the potential to create digital clones of your employees, it can spread fake news as easily as real news, it can be used for deception as easily as for benefit. Is your organisation prepared for the social, personal, cultural, and emotional impacts of AI? Do you know how AI will evolve in your organisation?

When we focus on the future of AI, we often interview AI leaders, business leaders, futurists, and analysts. I haven’t seen enough focus on psychologists, sociologists, historians, academics, counsellors, or even regulators! The Internet and social media changed the world more than we ever imagined – at this stage, it looks like these two were just a rehearsal for the real show – Artificial Intelligence.

Lack of Government or Industry Regulation Means You Need to Self-Regulate

These rapid developments – and the notable silence from governments, lawmakers, and regulators – make the requirement for an AI Ethics Policy for your organisation urgent! Even if you have one, it probably needs updating, as the scenarios that AI can operate within are growing and changing literally every day.

- For example, your customer service team might want to create a virtual customer service agent from a real person. What is the policy on this? How will it impact the person?

- Your marketing team might be using ChatGPT or Bard for content creation. Do you have a policy specifically for the creation and use of content using assets your business does not own?

- What data is acceptable to be ingested by a public Large Language Model (LLM)? Are you governing data at creation and publishing to ensure these policies are met?

- With the impending public launch of Microsoft’s Copilot AI service, what data can be ingested by Copilot? How are you governing the distribution of the insights that come out of that capability?

If policies are not put in place, data is not tagged, and staff are not trained before using a tool such as Copilot, your business is likely to break some privacy or employment laws – on the very first day!
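To make the idea of tagging data before it reaches an AI tool more concrete, here is a minimal Python sketch of an ingestion gate. Everything in it is hypothetical: the tool names, the sensitivity tags, the `ALLOWED_TAGS` policy table, and the `may_ingest` function are assumptions for illustration and do not reflect how Copilot or any public LLM actually handles data.

```python
from dataclasses import dataclass, field

# Hypothetical policy table: which sensitivity tags each AI tool may ingest.
ALLOWED_TAGS = {
    "public_llm": {"public"},
    "enterprise_copilot": {"public", "internal"},
}


@dataclass
class Document:
    name: str
    tags: set = field(default_factory=set)  # e.g. {"internal", "personal_data"}


def may_ingest(tool: str, doc: Document) -> bool:
    """Allow ingestion only if the document is tagged and every tag is permitted.

    Untagged documents and unknown tools are rejected by default.
    """
    allowed = ALLOWED_TAGS.get(tool, set())
    return bool(doc.tags) and doc.tags <= allowed


if __name__ == "__main__":
    docs = [
        Document("press release", {"public"}),
        Document("payroll export", {"internal", "personal_data"}),
        Document("untagged notes"),
    ]
    for doc in docs:
        print(doc.name, "->", may_ingest("enterprise_copilot", doc))
```

Rejecting untagged documents by default reflects the point above: governance has to start at creation and publishing, otherwise the gate has nothing to check.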

What do the LLMs Say About AI Ethics Policies?

So where do you go when looking for an AI Ethics policy? ChatGPT and Bard of course! I asked the two for a modern AI Ethics policy.

You can read what they generated in the graphic below.

I personally prefer the ChatGPT4 version as it is more prescriptive. At the same time, I would argue that MOST of the AI tools that your business has access to today don’t meet all of these principles. And while they are tools and the ethics should dictate the way the tools are used, with AI you cannot always separate the process and outcome from the tool.

For example, a tool that is inherently designed to learn an employee’s character, style, or mannerisms cannot be unbiased if it is based on a biased opinion (and humans have biases!).

LLMs take data, content, and insights created by others, and give it to their customers to reuse. Are you happy with your website being used as a tool to train a startup on the opportunities in the markets and customers you serve?

By making content public, you acknowledge the risk of others using it. But at least they visited your website or app to consume it. Not anymore…

A Policy is Useless if it Sits on a Shelf

Your AI ethics policy needs to be more than a published document. It should be the beginning of a conversation across the entire organisation about the use of AI. Your employees need to be trained in the policy. It needs to be part of the culture of the business – particularly as low and no-code capabilities push these AI tools, practices, and capabilities into the hands of many of your employees.

Nearly every business leader I interview mentions that their organisation is an “intelligent, data-led, business.” What is the role of AI in driving this intelligent business? If being data-driven and analytical is in the DNA of your organisation, soon AI will also be at the heart of your business. You might think you can delay your investments to get it right – but your competitors may be ahead of you.

So, as you jump head-first into the AI pool, start to create, improve and/or socialise your AI Ethics Policy. It should guide your investments, protect your brand, empower your employees, and keep your business resilient and compliant with legacy and new legislation and regulations.