The Asia Pacific region is rapidly emerging as a global economic powerhouse, with AI playing a key role in driving this growth. The AI market in the region is projected to reach USD 244B by 2025, and organisations must adapt and scale AI effectively to thrive. The question is no longer whether to adopt AI, but how to do so responsibly and effectively for long-term success.

The APAC AI Outlook 2025 highlights how Asia Pacific enterprises are moving beyond experimentation to maximise the impact of their AI investments.

Here are 5 key trends that will impact the AI landscape in 2025.

1. Strategic AI Deployment

AI is no longer a buzzword, but Asia Pacific’s transformation engine. It’s reshaping industries and fuelling growth. Initially, high costs and complex ROI pushed leaders toward quick wins. Now, the game has changed. As AI adoption matures, the focus is shifting from short-term gains to long-term, innovation-driven strategies.

GenAI is at the heart of this shift, moving beyond the periphery to power core business functions and deliver competitive advantage.

Organisations are rethinking AI investments, looking beyond pure financials to consider the impact on jobs, governance, and data readiness. The AI journey is about balancing ambition with practicality.

2. Optimising AI: Tailored Open-Source Models

Smaller, open-source, and specialised AI models will gain momentum as organisations seek efficiency, flexibility, and sustainability in their AI strategies.

Unlike LLMs, which require high computational power, smaller, task-specific models can offer comparable performance on the tasks they are built for while being more resource-efficient. This makes them ideal for organisations working with proprietary data or limited computational resources.

Beyond cost and performance, these models are more energy-efficient, addressing growing concerns about AI’s environmental impact.

3. Centralised Tools for Responsible Innovation

Navigating the increasingly complex AI landscape demands unified management and governance. Organisations will prioritise centralised frameworks to tame the chaos of diverse AI solutions, ensuring compliance (think EU AI Act) while boosting transparency and security.

Automated AI lifecycle management tools will streamline oversight, providing real-time tracking of model performance, usage, and issues like drift.
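As a rough illustration of what tracking drift can involve, the sketch below computes a Population Stability Index (PSI) between a reference sample and recent production values for one numeric feature. PSI is one common drift statistic chosen here as an example; the research does not name a specific method. The function name, the bin count, and the 0.2 alert threshold are all assumptions (0.2 is a frequently used rule of thumb, not a standard).

```python
import math
from typing import Sequence


def population_stability_index(
    reference: Sequence[float],
    current: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """Return the PSI between two samples of one numeric feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # guard against a constant reference sample

    def bucket_shares(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            # Clamp values outside the reference range into the edge buckets.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # eps keeps empty buckets from producing log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    ref_shares = bucket_shares(reference)
    cur_shares = bucket_shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_shares, cur_shares))


# Hypothetical data: scores seen at validation time vs. shifted production scores.
baseline = [i / 100 for i in range(100)]
shifted = [min(x + 0.3, 1.0) for x in baseline]

psi = population_stability_index(baseline, shifted)
drift_alert = psi > 0.2  # assumed threshold; tune per feature and use case
```

In practice a lifecycle tool would run a check like this on a schedule for each monitored feature and raise an alert to the model owner when the threshold is crossed.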

By using flexible developer toolkits and vendor-agnostic strategies, organisations can accelerate innovation while staying adaptable as the technology evolves.

4. Supercharging Workflows With Agentic AI

Organisations will embrace Agentic AI to automate complex workflows and drive business value. Traditional automation tools struggle with real-world dynamism, but AI-powered agents offer a flexible solution. They empower autonomous task execution, intelligent decision-making, and adaptability to changing circumstances.

These agents, often using GenAI, understand complex instructions and learn from experience. They collaborate with humans, boosting efficiency, and adapt to disruptions, unlike rigid traditional automation.

Agentic workflows are key to redefining work, enabling agility and innovation.

5. From Productivity to People

The focus of AI conversations will shift from simply boosting productivity to using AI for human-centric innovation that transforms both employee roles and customer experiences.

For employees, AI will handle routine tasks, enabling them to focus on creativity and innovation. Education and training will be crucial for a smooth transition to AI-powered workflows.

For customers, AI is evolving to offer more empathetic, personalised interactions by understanding individual emotions, motivations, and preferences. Organisations are recognising the need for transparent, explainable AI to build trust, tailor solutions, and deepen engagement.

Hit-or-miss AI experiments have leaders demanding results. In this breakneck AI landscape, strategy and realism are your survival tools. A pragmatic approach? High-impact, achievable goals. Know your capabilities, prioritise manageable projects, and stay flexible. The AI winners will be those who champion human-AI collaboration, bake in ethics, and never stop researching.

AI has broken free from the IT department. It’s no longer a futuristic concept but a present-day reality transforming every facet of business. Departments across the enterprise are now empowered to harness AI directly, fuelling innovation and efficiency without waiting for IT’s stamp of approval. The result? A more agile, data-driven organisation where AI unlocks value and drives competitive advantage.

Ecosystm’s research over the past two years, including surveys and in-depth conversations with business and technology leaders, confirms this trend: AI is the dominant theme. And while the potential is clear, the journey is just beginning.

Here are key AI insights for HR Leaders from our research.

HR: Leading the Charge (or Should Be)

Our research reveals a fascinating dynamic in HR. While 54% of HR leaders currently use AI for recruitment (scanning resumes, etc.), their vision extends far beyond. A striking majority plan to expand AI’s reach into crucial areas: 74% for workforce planning, 68% for talent development and training, and 62% for streamlining employee onboarding.

The impact is tangible, with organisations already seeing significant benefits. GenAI has streamlined presentation creation for bank employees, allowing them to focus on content rather than formatting and improving efficiency. Integrating GenAI into knowledge bases has simplified access to internal information, making it quicker and easier for employees to find answers. AI-driven recruitment screening is accelerating hiring in the insurance sector by analysing resumes and applications to identify top candidates efficiently. Meanwhile, AI-powered workforce management systems are transforming field worker management by optimising job assignments, enabling real-time tracking, and ensuring quick responses to changes.

The Roadblocks and the Opportunity

Despite this promising outlook, HR leaders face significant hurdles. Limited exploration of use cases, the absence of a unified organisational AI strategy, and ethical concerns are among the key barriers to wider AI deployments.

Perhaps most concerning is the limited role HR plays in shaping AI strategy. While 57% of tech and business leaders cite increased productivity as the main driver for AI investments, HR’s influence is surprisingly weak. Only 20% of HR leaders define AI use cases, manage implementation, or are involved in governance and ownership. A mere 8% primarily manage AI solutions.

This disconnect represents a massive opportunity.

2025 and Beyond: A Call to Action for HR

Despite these challenges, our research indicates HR leaders are prioritising AI for 2025. Increased productivity is the top expected outcome, while three in ten will focus on identifying better HR use cases as part of a broader data-centric approach.

The message is clear: HR needs to step up and claim its seat at the AI table. By proactively defining use cases, championing ethical considerations, and collaborating closely with tech teams, HR can transform itself into a strategic driver of AI adoption, unlocking the full potential of this transformative technology for the entire organisation. The future of HR is intelligent, and it’s time for HR leaders to embrace it.

The White House has mandated federal agencies to conduct risk assessments on AI tools and appoint officers, including Chief Artificial Intelligence Officers (CAIOs), for oversight. This directive, led by the Office of Management and Budget (OMB), aims to modernise government AI adoption and promote responsible use. Agencies must integrate AI oversight into their core functions, ensuring safety, security, and ethical use. CAIOs will be tasked with assessing AI’s impact on civil rights and market competition. Agencies have until December 1, 2024, to address non-compliant AI uses, emphasising swift implementation.

How will this impact global AI adoption? Ecosystm analysts share their views.

The Larger Impact: Setting a Global Benchmark

This sets a potential global benchmark for AI governance, with the U.S. leading the way in responsible AI use, inspiring other nations to follow suit. The emphasis on transparency and accountability could boost public trust in AI applications worldwide.

The appointment of CAIOs across U.S. federal agencies marks a significant shift towards ethical AI development and application. Through mandated risk management practices, such as independent evaluations and real-world testing, the government recognises AI’s profound impact on rights, safety, and societal norms.

This isn’t merely a regulatory action; it’s a foundational shift towards embedding ethical and responsible AI at the heart of government operations. The balance struck between fostering innovation and ensuring public safety and rights protection is particularly noteworthy.

This initiative reflects a deep understanding of AI’s dual-edged nature – the potential to significantly benefit society, countered by its risks.

The Larger Impact: Blueprint for Risk Management

In what is likely a world first, AI brings together technology, legal, and policy leaders in a concerted effort to put guardrails around a new technology before a major disaster materialises. These efforts span from technology firms providing a form of legal assurance for use of their products (for example Microsoft’s Customer Copyright Commitment) to parliaments ratifying AI regulatory laws (such as the EU AI Act) to the current directive of installing AI accountability in US federal agencies just in the past few months.

It is universally accepted that AI needs risk management to be responsible and acceptable – installing an accountable C-suite role is another major step in AI risk mitigation.

This is an interesting move for three reasons:

- The balance of innovation versus governance and risk management.

- Accountability mandates for each agency’s use of AI in a public and transparent manner.

- Transparency mandates regarding AI use cases and technologies, including those that may impact safety or rights.

Impact on the Private Sector: Greater Accountability

AI governance is one of the rare occasions where government action moves faster than the private sector. While the immediate pressure is now on US federal agencies (and there are 438 of them) to identify and appoint CAIOs, the announcement sends a clear signal to the private sector.

Following hot on the heels of recent steps towards AI legislation, it puts AI governance straight into the boardroom. The air is getting very thin for enterprises still in denial that AI governance has advanced to strategic importance. And unlike the CFC ban in the Eighties (the Montreal Protocol likely set the record for concerted global action), this time the technology providers are fully on board.

There’s no excuse for delaying the acceleration of AI governance and establishing accountability for AI within organisations.

Impact on Tech Providers: More Engagement Opportunities

Technology vendors are poised to benefit from the medium to long-term acceleration of AI investment, especially those based in the U.S., given government agencies’ preferences for local sourcing.

In the short term, our advice to technology vendors and service partners is to actively engage with CAIOs in client agencies to identify existing AI usage in their tools and platforms, as well as algorithms implemented by consultants and service partners.

Once AI guardrails are established within agencies, tech providers and service partners can expedite investments by determining which of their platforms, tools, or capabilities comply with specific guardrails and which do not.

Impact on SE Asia: Promoting a Digital Innovation Hub

By 2030, Southeast Asia is poised to emerge as the world’s fourth-largest economy – much of that growth will be propelled by the adoption of AI and other emerging technologies.

The projected economic growth presents both challenges and opportunities, emphasising the urgency for regional nations to enhance their AI governance frameworks and stay competitive with international standards. This initiative highlights the critical role of AI integration for private sector businesses in Southeast Asia, urging organisations to proactively address AI’s regulatory and ethical complexities. Furthermore, it has the potential to stimulate cross-border collaborations in AI governance and innovation, bridging the U.S., Southeast Asian nations, and the private sector.

It underscores the global interconnectedness of AI policy and its impact on regional economies and business practices.

By leading with a strategic approach to AI, the U.S. sets an example for Southeast Asia and the global business community to reevaluate their AI strategies, fostering a more unified and responsible global AI ecosystem.

The Risks

U.S. government agencies face the challenge of sourcing experts in technology, legal frameworks, risk management, privacy regulations, civil rights, and security, while also identifying ongoing AI initiatives. Establishing a unified definition of AI and cataloguing processes involving ML, algorithms, or GenAI is essential, given AI’s integral role in organisational processes over the past two decades.

However, there’s a risk that focusing on AI governance may hinder adoption.

The role should prioritise establishing AI guardrails to expedite compliant initiatives while flagging those needing oversight. While these guardrails will facilitate “safe AI” investments, the documentation process could potentially delay progress.

The initiative also echoes a 20th-century mindset for a 21st-century dilemma. Hiring leaders and forming teams feel like a traditional approach. Today, organisations can increase productivity by considering AI and automation as initial solutions. Investing more time upfront to discover initiatives, set guardrails, and implement AI decision-making processes could significantly improve CAIO effectiveness from the outset.

As AI evolves rapidly, the emergence of GenAI technologies such as GPT models has sparked a novel and critical role: prompt engineering. This specialised function is becoming indispensable in optimising the interaction between humans and AI, serving as a bridge that translates human intentions into prompts that guide AI to produce desired outcomes. In this Ecosystm Insight, I will explore prompt engineering: why it matters, what the role involves, and the impact it has on harnessing AI’s full potential.

Understanding Prompt Engineering

Prompt engineering is an interdisciplinary role that combines elements of linguistics, psychology, computer science, and creative writing. It involves crafting inputs (prompts) that are specifically designed to elicit the most accurate, relevant, and contextually appropriate responses from AI models. This process requires a nuanced understanding of how different models process information, as well as creativity and strategic thinking to manipulate these inputs for optimal results.

As GenAI applications become more integrated across sectors – ranging from creative industries to technical fields – the ability to effectively communicate with AI systems has become a cornerstone of leveraging AI capabilities. Prompt engineers play a crucial role in this scenario, refining the way we interact with AI to enhance productivity, foster innovation, and create solutions that were previously unimaginable.

The Art and Science of Crafting Prompts

Prompt engineering is as much an art as it is a science. It demands a balance between technical understanding of AI models and the creative flair to engage these models in producing novel content. A well-crafted prompt can be the difference between an AI generating generic, irrelevant content and producing work that is insightful, innovative, and tailored to specific needs.

Key responsibilities in prompt engineering include:

- Prompt Optimisation. Fine-tuning prompts to achieve the highest quality output from AI models. This involves understanding the intricacies of model behaviour and leveraging this knowledge to guide the AI towards desired responses.

- Performance Testing and Iteration. Continuously evaluating the effectiveness of different prompts through systematic testing, analysing outcomes, and refining strategies based on empirical data.

- Cross-Functional Collaboration. Engaging with a diverse team of professionals, including data scientists, AI researchers, and domain experts, to ensure that prompts are aligned with project goals and leverage domain-specific knowledge effectively.

- Documentation and Knowledge Sharing. Developing comprehensive guidelines, best practices, and training materials to standardise prompt engineering methodologies within an organisation, facilitating knowledge transfer and consistency in AI interactions.
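The "Performance Testing and Iteration" responsibility above can be sketched as a small comparison harness. This is a minimal illustration under stated assumptions: `fake_model` is a hypothetical stand-in for a real model call (no vendor API is used), and keyword coverage is a deliberately simple scoring rule that a real team would replace with task-specific evaluation.

```python
from typing import Callable, Dict, List


def keyword_score(output: str, required_keywords: List[str]) -> float:
    """Fraction of required keywords that appear in the output (case-insensitive)."""
    text = output.lower()
    hits = sum(1 for kw in required_keywords if kw.lower() in text)
    return hits / len(required_keywords)


def compare_prompts(
    prompts: Dict[str, str],
    test_inputs: List[str],
    required_keywords: List[str],
    model: Callable[[str], str],
) -> Dict[str, float]:
    """Return the mean keyword score per prompt variant across all test inputs."""
    results = {}
    for name, template in prompts.items():
        scores = [
            keyword_score(model(template.format(text=item)), required_keywords)
            for item in test_inputs
        ]
        results[name] = sum(scores) / len(scores)
    return results


def fake_model(prompt: str) -> str:
    """Hypothetical stand-in for a real model call; it only reacts to one cue."""
    if "risks" in prompt.lower():
        return "Summary with benefits and risks."
    return "Summary with benefits."


# Two hypothetical prompt variants evaluated on the same test inputs.
variants = {
    "v1": "Summarise: {text}",
    "v2": "Summarise, covering benefits and risks: {text}",
}
scores = compare_prompts(
    variants, ["Report A", "Report B"], ["benefits", "risks"], fake_model
)
best = max(scores, key=scores.get)
```

Keeping the test inputs and scoring rule fixed while only the prompt template changes is what makes the comparison repeatable, and the resulting scores can feed the documentation and knowledge-sharing step.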

The Strategic Importance of Prompt Engineering

Effective prompt engineering can significantly enhance the efficiency and outcomes of AI projects. By reducing the need for extensive trial and error, prompt engineers help streamline the development process, saving time and resources. Moreover, their work is vital in mitigating biases and errors in AI-generated content, contributing to the development of responsible and ethical AI solutions.

As AI technologies continue to advance, the role of the prompt engineer will evolve, incorporating new insights from research and practice. The ability to dynamically interact with AI, guiding its creative and analytical processes through precisely engineered prompts, will be a key differentiator in the success of AI applications across industries.

Want to Hire a Prompt Engineer?

Here is a sample job description for a prompt engineer if you think that your organisation will benefit from the role.

Conclusion

Prompt engineering represents a crucial evolution in the field of AI, addressing the gap between human intention and machine-generated output. As we continue to explore the boundaries of what AI can achieve, the demand for skilled prompt engineers – who can navigate the complex interplay between technology and human language – will grow. Their work not only enhances the practical applications of AI but also pushes the frontier of human-machine collaboration, making them indispensable in the modern AI ecosystem.

2023 has been an eventful year. In May, the WHO announced that the pandemic was no longer a global public health emergency. However, other forces that shaped 2023 will continue to impact the market, well into 2024 and beyond.

Global Conflicts. The Russian invasion of Ukraine persisted; the Israeli-Palestinian conflict escalated into war; African nations continued to see armed conflicts and political crises; there has been significant population displacement.

Banking Crisis. American regional banks collapsed, with the failures of Silicon Valley Bank and First Republic Bank ranking as the third- and second-largest in US history; Credit Suisse was acquired by UBS in Switzerland.

Climate Emergency. The UN’s synthesis report found that there’s still a chance to limit global temperature increases to 1.5°C; Loss and Damage conversations continued without a significant impact.

Power of AI. The interest in generative AI models heated up; tech vendors incorporated foundational models in their enterprise offerings – Microsoft Copilot was launched; awareness of AI risks strengthened calls for Ethical/Responsible AI.

Click below to find out what Ecosystm analysts Achim Granzen, Darian Bird, Peter Carr, Sash Mukherjee and Tim Sheedy consider the top 5 tech market forces that will impact organisations in 2024.

#1 State-sponsored Attacks Will Alter the Nature Of Security Threats

It is becoming clearer that the post-Cold-War era is over, and we are transitioning to a multi-polar world. In this new age, malevolent governments will become increasingly emboldened to carry out cyber and physical attacks without the concern of sanction.

Unlike most malicious actors driven by profit today, state adversaries will be motivated to maximise disruption.

Rather than encrypting valuable data with ransomware, they will deploy wiper malware. State-sponsored attacks against critical infrastructure, such as transportation, energy, and undersea cables, will be designed to inflict irreversible damage. The recent 23andMe breach is an example of how ethnically directed attacks could be designed to sow fear and distrust. Additionally, even the threat of spyware and phishing will cause some activists, journalists, and politicians to self-censor.

#2 AI Legislation Breaches Will Occur, But Will Go Unpunished

With US President Biden’s recently published “Executive order on Safe, Secure and Trustworthy AI” and the European Union’s “AI Act” set for adoption by the European Parliament in mid-2024, codified and enforceable AI legislation is on the verge of becoming reality. However, oversight structures with powers to enforce the rules are currently not in place for either initiative and will take time to build out.

In 2024, the first instances of AI legislation violations will surface – potentially revealed by whistleblowers or significant public AI failures – but no legal action will be taken yet.

#3 AI Will Increase Net-New Carbon Emissions

In an age focused on reducing carbon and greenhouse gas emissions, AI is contributing to the opposite. Organisations often fail to track these emissions under the broader “Scope 3” category. Researchers at the University of Massachusetts, Amherst, found that training a single AI model can emit over 283 tonnes of carbon dioxide, equal to emissions from 62.6 gasoline-powered vehicles in a year.
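A quick check of the comparison quoted above, treating the quoted figure as 283 metric tonnes: dividing it by 62.6 vehicles implies roughly 4.5 tonnes of carbon dioxide per vehicle per year. The sketch below makes that arithmetic explicit so the same conversion can be applied to other emission estimates; the function names are illustrative, and the per-vehicle factor is derived only from the two figures in the text.

```python
TRAINING_EMISSIONS_TONNES = 283.0  # figure quoted in the text
EQUIVALENT_VEHICLES = 62.6         # figure quoted in the text


def implied_tonnes_per_vehicle_year(total_tonnes: float, vehicles: float) -> float:
    """Annual emissions per vehicle implied by the quoted comparison."""
    return total_tonnes / vehicles


def vehicle_year_equivalent(emissions_tonnes: float, tonnes_per_vehicle_year: float) -> float:
    """Express an emissions estimate as a number of vehicle-years."""
    return emissions_tonnes / tonnes_per_vehicle_year


per_vehicle = implied_tonnes_per_vehicle_year(
    TRAINING_EMISSIONS_TONNES, EQUIVALENT_VEHICLES
)
# Converting the original figure back recovers the quoted vehicle count.
round_trip = vehicle_year_equivalent(TRAINING_EMISSIONS_TONNES, per_vehicle)
```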

Organisations rely on cloud providers for carbon emission reduction (Amazon targets net-zero by 2040, and Microsoft and Google aim for 2030, with the trajectory influencing global climate change); yet transparency on AI greenhouse gas emissions is limited. Diverse routes to net-zero will determine the level of greenhouse gas emissions.

Some argue that AI can help in better mapping a path to net-zero, but there is concern about whether the damage caused in the process will outweigh the benefits.

#4 ESG Will Transform into GSE to Become the Future of GRC

Previously viewed as a standalone concept, ESG will be increasingly recognised as integral to Governance, Risk, and Compliance (GRC) practices. The ‘E’ in ESG, representing environmental concerns, is becoming synonymous with compliance due to growing environmental regulations. The ‘S’, or social aspect, is merging with risk management, addressing contemporary issues such as ethical supply chains, workplace equity, and modern slavery, which traditional GRC models often overlook. Governance continues to be a crucial component.

The key to organisational adoption and transformation will be understanding that ESG is not an isolated function but is intricately linked with existing GRC capabilities.

This will present opportunities for GRC and Risk Management providers to adapt their current solutions, already deployed within organisations, to enhance ESG effectiveness. This strategy promises mutual benefits, improving compliance and risk management while simultaneously advancing ESG initiatives.

#5 Productivity Will Dominate Workforce Conversations

The skills discussions have shifted significantly over 2023. At the start of the year, HR leaders were still dealing with the ‘productivity conundrum’ – balancing employee flexibility and productivity in a hybrid work setting. There were also concerns about skills shortages, particularly in IT, as organisations prioritised tech-driven transformation and innovation.

Now, the focus is on assessing the pros and cons (mainly ROI) of providing employees with advanced productivity tools. For example, early studies on Microsoft Copilot showed that 70% of users experienced increased productivity. Discussions, including Narayana Murthy’s remarks on 70-hour work weeks, have re-ignited conversations about employee well-being and the impact of technology in enabling employees to achieve more in less time.

Against the backdrop of skills shortages and the need for better employee experience to retain talent, organisations are increasingly adopting/upgrading their productivity tools – starting with their Sales & Marketing functions.

The challenge of AI is that it is hard to build a business case when the outcomes are inherently uncertain. Unlike a traditional process improvement procedure, there are few guarantees that AI will solve the problem it is meant to solve. Organisations that have been experimenting with AI for some time are aware of this, and have begun to formalise their Proof of Concept (PoC) process to make it easily repeatable by anyone in the organisation who has a use case for AI. PoCs can validate assumptions, demonstrate the feasibility of an idea, and rally stakeholders behind the project.

PoCs are particularly useful at a time when AI is experiencing both heightened visibility and increased scrutiny. Boards, senior management, risk, legal and cybersecurity professionals are all scrutinising AI initiatives more closely to ensure they do not put the organisation at risk of breaking laws and regulations or damaging customer or supplier relationships.

13 Steps to Building an AI PoC

Despite seeming to be lightweight and easy to implement, a good PoC is actually methodologically sound and consistent in its approach. To implement a PoC for AI initiatives, organisations need to:

- Clearly define the problem. Businesses need to understand and clearly articulate the problem they want AI to solve. Is it about improving customer service, automating manual processes, enhancing product recommendations, or predicting machinery failure?

- Set clear objectives. What will success look like for the PoC? Is it about demonstrating technical feasibility, showing business value, or both? Set tangible metrics to evaluate the success of the PoC.

- Limit the scope. PoCs should be time-bound and narrow in scope. Instead of trying to tackle a broad problem, focus on a specific use case or a subset of data.

- Choose the right data. AI is heavily dependent on data. For a PoC, select a representative dataset that’s large enough to provide meaningful results but manageable within the constraints of the PoC.

- Build a multidisciplinary team. Involve team members from IT, data science, business units, and other relevant stakeholders. Their combined perspectives will ensure both technical and business feasibility.

- Prioritise speed over perfection. Use available tools and platforms to expedite the development process. It’s more important to quickly test assumptions than to build a highly polished solution.

- Document assumptions and limitations. Clearly state any assumptions made during the PoC, as well as known limitations. This helps set expectations and can guide future work.

- Present results clearly. Once the PoC is complete, create a clear and concise report or presentation that showcases the results, methodologies, and potential implications for the business.

- Get feedback. Allow stakeholders to provide feedback on the PoC. This includes end-users, technical teams, and business leaders. Their insights will help refine the approach and guide future iterations.

- Plan for the next steps. What actions need to follow a successful PoC demonstration? This might involve a pilot project with a larger scope, integrating the AI solution into existing systems, or scaling the solution across the organisation.

- Assess costs and ROI. Evaluate the costs associated with scaling the solution and compare them with the anticipated ROI. This will be crucial for securing budget and support for further expansion.

- Continually learn and iterate. AI is an evolving field. Use the PoC as a learning experience and be prepared to continually iterate on your solutions as technologies and business needs evolve.

- Consider ethical and social implications. Ensure that the AI initiative respects privacy, reduces bias, and upholds the ethical standards of the organisation. This is critical for building trust and ensuring long-term success.
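Two of the steps above, setting tangible metrics and assessing ROI, lend themselves to a short sketch. This is an illustration only: the class and function names, the metric names, and every number are hypothetical, and the ROI formula is the plain (benefit - cost) / cost ratio rather than a full financial model.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class SuccessCriterion:
    """One tangible PoC metric with the target agreed before the PoC starts."""
    name: str
    target: float
    higher_is_better: bool = True

    def met(self, observed: float) -> bool:
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


def evaluate_poc(
    criteria: List[SuccessCriterion], observed: Dict[str, float]
) -> Dict[str, bool]:
    """Map each criterion name to whether the observed value met its target."""
    return {c.name: c.met(observed[c.name]) for c in criteria}


def simple_roi(annual_benefit: float, annual_cost: float) -> float:
    """ROI as (benefit - cost) / cost."""
    return (annual_benefit - annual_cost) / annual_cost


# Hypothetical contact-centre PoC: targets set up front, results recorded after.
criteria = [
    SuccessCriterion("precision", 0.85),
    SuccessCriterion("avg_handling_minutes", 6.0, higher_is_better=False),
]
observed = {"precision": 0.88, "avg_handling_minutes": 6.5}

results = evaluate_poc(criteria, observed)
roi = simple_roi(annual_benefit=300_000, annual_cost=200_000)
```

Recording the targets before the PoC runs keeps the evaluation honest: here the precision target is met but the handling-time target is not, which is exactly the kind of mixed result stakeholders need to see when deciding on next steps.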

Customising AI for Your Business

The primary purpose of a PoC is to validate an idea quickly and with minimal risk. It should provide a clear path for decision-makers to either proceed with a more comprehensive implementation or to pivot and explore alternative solutions. It is important for the legal, risk and cybersecurity teams to be aware of the outcomes and support further implementation.

AI initiatives will inevitably drive significant productivity and customer experience improvements – but not every solution will be right for the business. At Ecosystm, we have come across organisations that have employed conversational AI in their contact centres to achieve entirely distinct results – so the AI experience of peers and competitors may not be relevant. A consistent PoC process that trains business and technology teams across the organisation and encourages experimentation at every possible opportunity would be far more useful.

It’s been barely one year since we entered the Generative AI Age. On November 30, 2022, OpenAI launched ChatGPT, with no fanfare or promotion. Since then, Generative AI has become arguably the most talked-about tech topic, both in terms of opportunities it may bring and risks that it may carry.

The landslide success of ChatGPT and other Generative AI applications with consumers and businesses has put a renewed and strengthened focus on the potential risks associated with the technology – and how best to regulate and manage these. Government bodies and agencies have created voluntary guidelines for the use of AI for a number of years now (the Singapore Framework, for example, was launched in 2019).

There is no active legislation on the development and use of AI yet. Crucially, however, a number of such initiatives are currently on their way through legislative processes globally.

EU’s Landmark AI Act: A Step Towards Global AI Regulation

The European Union’s “Artificial Intelligence Act” is a leading example. The European Commission (EC) started examining AI legislation in 2020 with a focus on

- Protecting consumers

- Safeguarding fundamental rights, and

- Avoiding unlawful discrimination or bias

The EC published an initial legislative proposal in 2021, and the European Parliament adopted a revised version as their official position on AI in June 2023, moving the legislation process to its final phase.

This proposed EU AI Act takes a risk management approach to regulating AI. Organisations looking to employ AI must take note: an internal risk management approach to deploying AI would essentially be mandated by the Act. It is likely that other legislative initiatives will follow a similar approach, making the AI Act a potential role model for global legislations (following the trail blazed by the General Data Protection Regulation). The “G7 Hiroshima AI Process”, established at the G7 summit in Japan in May 2023, is a key example of international discussion and collaboration on the topic (with a focus on Generative AI).

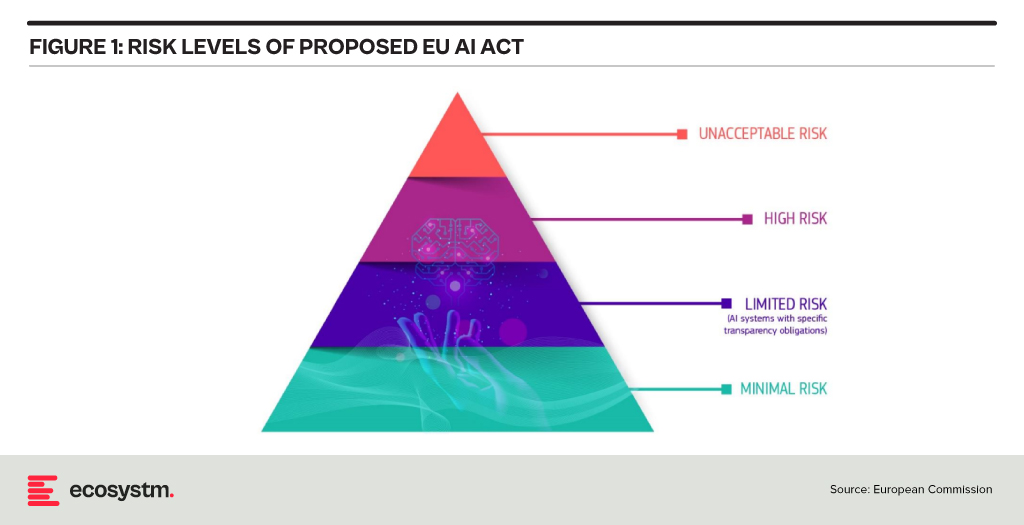

Risk Classification and Regulations in the EU AI Act

At the heart of the AI Act is a system to assess the risk level of AI technology, classify the technology (or its use case), and prescribe appropriate regulations to each risk class.

The Act distinguishes four risk levels: unacceptable, high, limited, and minimal. For each of these, it proposes a set of rules and regulations. Evidently, the regulatory focus is on High-Risk AI systems.
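For organisations preparing an internal inventory, the tiered approach can be sketched as a simple register. The tier names and example use cases below follow the commonly cited summary of the Commission's proposal; the treatment descriptions are heavily simplified, the register contents and function name are hypothetical, and none of this is a legal classification.

```python
from enum import Enum
from typing import Dict


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Simplified summary of how each tier is treated; not legal advice.
TREATMENT: Dict[RiskTier, str] = {
    RiskTier.UNACCEPTABLE: "prohibited",
    RiskTier.HIGH: "permitted subject to risk management and conformity requirements",
    RiskTier.LIMITED: "permitted subject to transparency obligations",
    RiskTier.MINIMAL: "permitted without additional obligations",
}

# Hypothetical internal register mapping use cases to an assessed tier.
USE_CASE_REGISTER: Dict[str, RiskTier] = {
    "social scoring of citizens": RiskTier.UNACCEPTABLE,
    "CV screening for recruitment": RiskTier.HIGH,
    "customer service chatbot": RiskTier.LIMITED,
    "spam filter": RiskTier.MINIMAL,
}


def required_treatment(use_case: str) -> str:
    """Look up the simplified treatment for a registered use case."""
    tier = USE_CASE_REGISTER.get(use_case)
    if tier is None:
        # Unknown use cases are escalated rather than silently approved.
        return "unclassified: escalate for review"
    return TREATMENT[tier]
```

Defaulting unregistered use cases to escalation, rather than to the minimal tier, reflects the risk management posture the Act is expected to mandate.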

Contrasting Approaches: EU AI Act vs. UK’s Pro-Innovation Regulatory Approach

The AI Act has received its share of criticism, and somewhat different approaches are being considered, notably in the UK. One set of criticism revolves around the lack of clarity and vagueness of concepts (particularly around person-related data and systems). Another set of criticism revolves around the strong focus on the protection of rights and individuals and highlights the potential negative economic impact for EU organisations looking to leverage AI, and for EU tech companies developing AI systems.

A white paper published by the UK government in March 2023, perhaps tellingly named “A pro-innovation approach to AI regulation”, emphasises a “pragmatic, proportionate regulatory approach … to provide a clear, pro-innovation regulatory environment”. The paper talks about an approach aiming to balance the protection of individuals with economic advancement for the UK on its way to becoming an “AI superpower”.

Further aspects of the EU AI Act are currently being critically discussed. For example, the current text exempts all open-source AI components not part of a medium or higher risk system from regulation but lacks definition and considerations for proliferation.

Adopting AI Risk Management in Organisations: The Singapore Approach

Regardless of how exactly AI regulations will turn out around the world, organisations must start today to adopt AI risk management practices. There is an added complexity: while the EU AI Act does clearly identify high-risk AI systems and example use cases, the realisation of regulatory practices must be tackled with an industry-focused approach.

The approach taken by the Monetary Authority of Singapore (MAS) is a primary example of an industry-focused approach to AI risk management. The Veritas Consortium, led by MAS, is a public-private-tech partnership consortium aiming to guide the financial services sector on the responsible use of AI. As there is no AI legislation in Singapore to date, the consortium currently builds on Singapore’s aforementioned “Model Artificial Intelligence Governance Framework”. Additional initiatives are already underway to focus specifically on Generative AI for financial services, and to build a globally aligned framework.

To Comply with Upcoming AI Regulations, Risk Management is the Path Forward

As AI regulation initiatives move from voluntary recommendation to legislation globally, a risk management approach is at the core of all of them. Adding risk management capabilities for AI is the path forward for organisations looking to deploy AI-enhanced solutions and applications. As that task can be daunting, an industry consortium approach can help navigate challenges and align on implementation and realisation strategies for AI risk management across the industry. Until AI legislation is in place, such industry consortia can chart the way for their industry – organisations should seek to participate now to gain a head start with AI.